Appendix E. Batching and throughput-oriented execution

Throughout the main chapters, the code we implemented usually processes one prompt or example at a time. This keeps the code compact and easier to understand, and it also helps keep the resource requirements manageable.

In practice, the code in this book is already expensive to run, so adding batching support everywhere would increase complexity and, depending on your available hardware, often bring little real benefit.

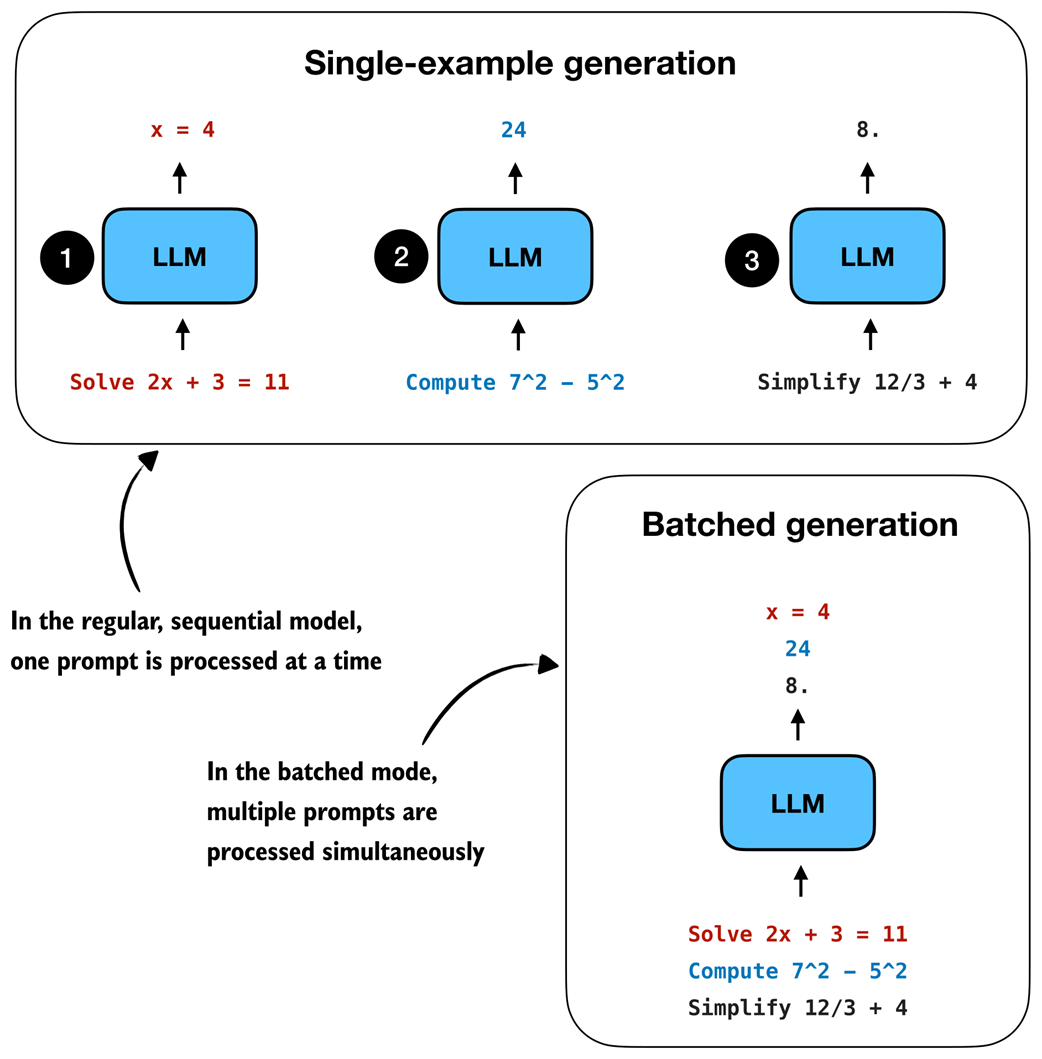

That said, if you have access to a relatively modern GPU with enough memory, batched execution (illustrated in figure E.1) can be very useful. For example, if we want to evaluate many problems, sample several responses per prompt, or train on multiple examples at once, batching can increase throughput and reduce the overall run time.

This appendix explains the broad idea behind batching and shows how to use the batched implementations in the supplementary materials across different chapters.

Figure E.1 An overview of single-example versus batched generation. In batched generation, several prompts are packed into one batch, processed in parallel, and then decoded together to improve throughput.
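The packing step in figure E.1 can be sketched in plain Python. This is a minimal illustration, not the implementation from the supplementary materials: the function name `pad_batch`, the padding ID of 0, and the choice to pad on the left are all assumptions made for this example, and the token IDs are made up.

```python
def pad_batch(token_id_lists, pad_id=0):
    """Left-pad variable-length token ID lists to a common length.

    Returns the padded batch and a mask that is 1 for real tokens
    and 0 for padding positions.
    """
    max_len = max(len(ids) for ids in token_id_lists)
    batch, mask = [], []
    for ids in token_id_lists:
        num_pad = max_len - len(ids)
        # Assumption: padding goes on the left so all prompts end at the same position
        batch.append([pad_id] * num_pad + list(ids))
        mask.append([0] * num_pad + [1] * len(ids))
    return batch, mask


# Hypothetical token IDs for three prompts of different lengths
prompts = [[5, 6, 7], [8, 9], [10]]
batch, mask = pad_batch(prompts)

print(batch)  # [[5, 6, 7], [0, 8, 9], [0, 0, 10]]
print(mask)   # [[1, 1, 1], [0, 1, 1], [0, 0, 1]]
```

Because every row now has the same length, the rows can be stacked into a single rectangular array and processed in one pass. The mask records which positions are padding so that they can be ignored.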

E.1 Why batching helps

When we talk about computational performance, it helps to distinguish between the following two goals:

- latency: how quickly we get the answer for one prompt;

- throughput: how many prompts we can process in a fixed amount of time.
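To make the difference concrete, the following sketch computes both quantities for a single prompt and for a batch. The timings are hypothetical numbers chosen only for illustration, not measurements, and the helper `throughput` is a name introduced for this example.

```python
def throughput(num_prompts, total_seconds):
    """Return the number of prompts processed per second."""
    return num_prompts / total_seconds


# Hypothetical timings, chosen only for illustration (not measurements)
single_seconds = 2.0  # one prompt, processed on its own
batch_size = 8
batch_seconds = 4.0   # eight prompts, processed together as one batch

single_tp = throughput(1, single_seconds)
batch_tp = throughput(batch_size, batch_seconds)

print(f"single:  latency {single_seconds:.1f} s, throughput {single_tp:.1f} prompts/s")
print(f"batched: latency {batch_seconds:.1f} s, throughput {batch_tp:.1f} prompts/s")
```

With these made-up numbers, each prompt in the batch waits longer for its answer (4 seconds instead of 2), but all eight prompts finish in 4 seconds rather than the 16 seconds that one-at-a-time processing would take. In other words, latency gets worse while throughput improves.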