2 Generating text with a pre-trained LLM

This chapter covers

- Setting up the code environment for working with LLMs

- Using a tokenizer to prepare input text for an LLM

- The step-by-step process of text generation using a pre-trained LLM

- Caching and compilation techniques for speeding up LLM text generation

In the previous chapter, we discussed the difference between conventional large language models (LLMs) and reasoning models, and we introduced several techniques for improving the reasoning capabilities of LLMs. These reasoning techniques are usually applied on top of a conventional (base) LLM.

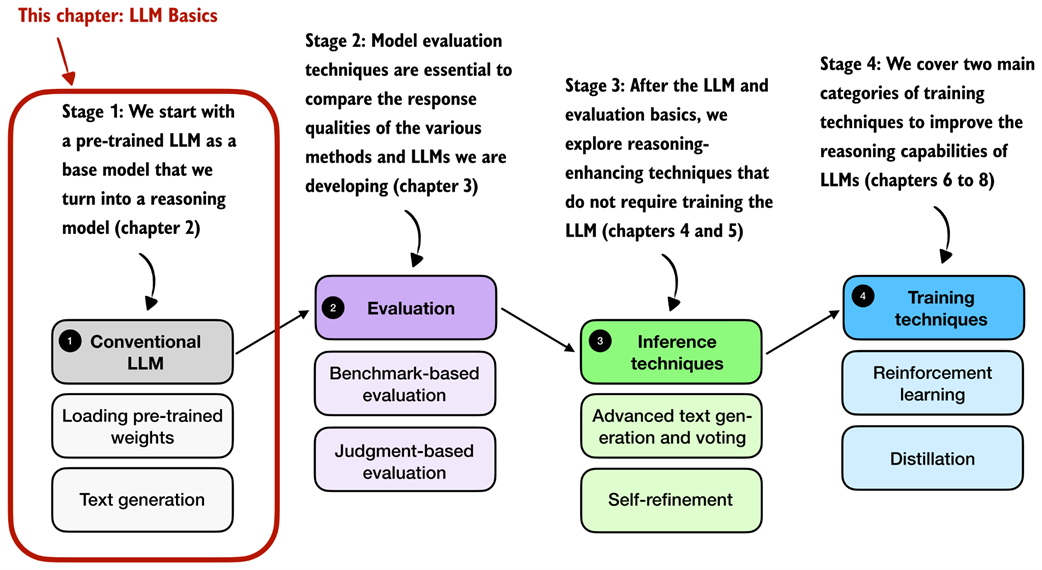

In this chapter, we will lay the groundwork for the upcoming chapters by loading a pre-trained base model, as illustrated in figure 2.1. Previously, we discussed how reasoning methods are often added after the usual post-training stages. However, starting from a base model makes it easier to see which capabilities come from the reasoning methods themselves. In other words, the conventional LLM in this chapter is a non-reasoning LLM, and more specifically a pre-trained base model rather than an instruction- or preference-tuned assistant.

Figure 2.1 A mental model depicting the four main stages of developing a reasoning model. This chapter focuses on stage 1, loading a conventional LLM and implementing the text generation functionality.