chapter six

6 Training reasoning models with reinforcement learning

This chapter covers

- The difference between reinforcement learning with human feedback (RLHF) and reinforcement learning with verifiable rewards (RLVR)

- Training reasoning LLMs as a reinforcement learning problem with task-correctness rewards

- Sampling multiple responses per prompt to compute group-relative learning signals

- Updating the LLM weights using group-based policy optimization for improved reasoning
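The minimal Python sketch below makes the reward and group-relative items above more concrete. It rests on several assumptions. The function names `verifiable_reward` and `group_relative_advantages` are hypothetical. The reward is an exact string match against a reference answer. The normalization (subtract the group mean, divide by the group's population standard deviation) follows the common GRPO-style recipe; it is not necessarily the implementation developed later in this chapter.

```python
import statistics


def verifiable_reward(response_answer: str, reference_answer: str) -> float:
    """Hypothetical RLVR-style reward (assumption): 1.0 for an exact match with
    the reference answer, 0.0 otherwise. Real verifiers may parse or normalize."""
    return 1.0 if response_answer.strip() == reference_answer.strip() else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Hypothetical helper: score each sampled response relative to the other
    responses for the same prompt. Uses the population standard deviation
    (an assumption); eps avoids division by zero when all rewards are equal."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Four sampled answers for one prompt whose reference answer is "42"
sampled_answers = ["42", "41", "42", "7"]
rewards = [verifiable_reward(a, "42") for a in sampled_answers]
advantages = group_relative_advantages(rewards)
print(rewards)                             # [1.0, 0.0, 1.0, 0.0]
print([round(a, 3) for a in advantages])   # [1.0, -1.0, 1.0, -1.0]
```

Correct responses receive a positive advantage and incorrect ones a negative advantage. No separate learned reward model or value estimate is needed for this step. If every response in a group earns the same reward, all advantages are zero, so that prompt contributes no learning signal.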

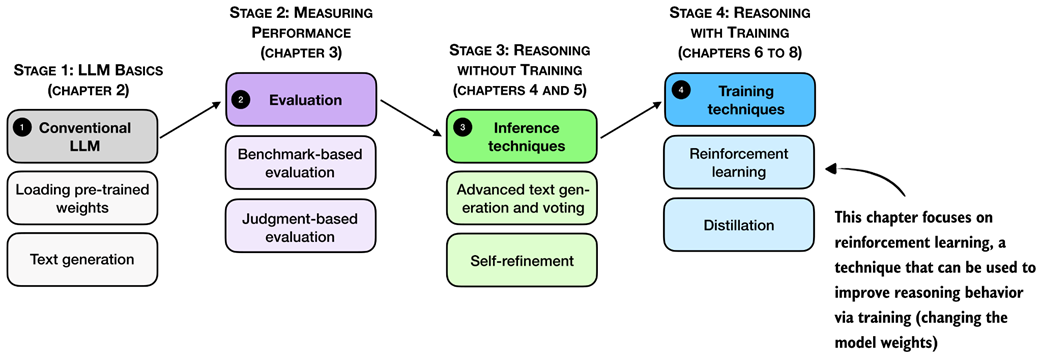

Reasoning performance and answer accuracy can be improved both by increasing the inference compute budget and by training the model with specific methods. As shown in figure 6.1, this chapter focuses on reinforcement learning, the most commonly used training method for reasoning models.

Figure 6.1 A mental model of the topics covered in this book. This chapter focuses on techniques that improve reasoning with additional training (stage 4). Specifically, this chapter covers reinforcement learning.

The next section gives a general introduction to reinforcement learning in the context of LLMs. It then discusses the two reinforcement learning approaches commonly used for LLMs: RLHF and RLVR.