chapter eight

8 Distilling reasoning models for efficient reasoning

This chapter covers

- Hard and soft distillation for reasoning models

- Creating and preparing a teacher-generated reasoning dataset

- Training and evaluating a distilled student model using cross-entropy loss

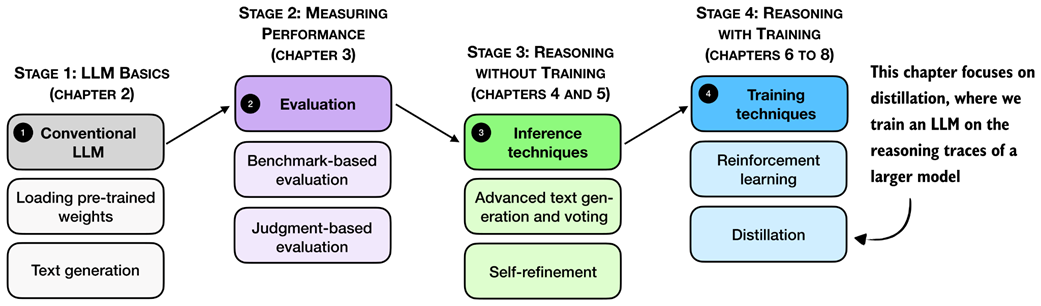

Reasoning performance can be improved not only through inference-time scaling and reinforcement learning, but also through distillation. In distillation, a smaller student model is trained on reasoning traces and answers generated by a larger teacher model. As shown in the overview in figure 8.1, this chapter focuses on this training-time technique.

Figure 8.1 A mental model of the topics covered in this book. This chapter focuses on distillation, where a smaller student model is trained on reasoning traces generated by a larger teacher model.

First, we’ll take a look at a general introduction to model distillation before discussing the individual steps shown in figure 8.1 in more detail.

8.1 Introduction to model distillation for reasoning tasks

Model distillation means training a smaller LLM, the student, on outputs produced by a larger LLM, the teacher. For reasoning models, these outputs usually include not only the final answer but also the intermediate reasoning trace that leads to it.