4 Loading data into DataFrames

This chapter covers

- Creating DataFrames from delimited text files and defining data schemas

- Extracting data from a SQL relational database and manipulating it using Dask

- Reading data from distributed filesystems (S3 and HDFS)

- Working with data stored in Parquet format

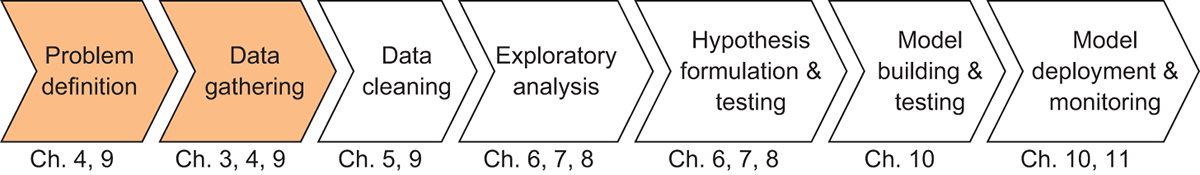

I’ve given you a lot of concepts to chew on over the course of the previous three chapters—all of which will serve you well along your journey to becoming a Dask expert. But, we’re now ready to roll up our sleeves and get into working with some data. As a reminder, figure 4.1 shows the data science workflow we’ll be following as we work through the functionality of Dask.

Figure 4.1 The Data Science with Python and Dask workflow

In this chapter, we remain at the very first steps of our workflow: Problem Definition and Data Gathering. Over the next few chapters, we’ll be working with the NYC Parking Ticket data to answer the following question:

What patterns can we find in the data that are correlated with increases or decreases in the number of parking tickets issued by the New York City parking authority?

Perhaps we might find that older vehicles are more likely to receive tickets, or perhaps a particular color attracts more attention from the parking authority than other colors. Using this guiding question, we’ll gather, clean, and explore the relevant data with Dask DataFrames. With that in mind, we’ll begin by learning how to read data into Dask DataFrames.

Kvn lx odr enuiqu gnsaceellh prrc data snisctiets zlxz zj edt ntnyedec rv dstuy data zr cort, te data cprr cwcn’r cfscpeiallyi lotedccle etl oqr ppsoeru vl revpiidcet mode jdfn nys sysilana. Yzqj zj uietq fnidtfree mtle c naitirdtalo emicaacd stuyd jn hwchi data zj felrayucl cnh lghhouyflutt dcotllece. Yelnaqtlenosyiu, vqd’ot eilykl re mxsv csaosr z bjxw taiyvre el goaestr amied ncg data rtsfoma tuoughohtr xddt career. Mk wfjf ercvo reading data in evcm lx vgr zrem prlupao tarofsm cny srtoega sssemty jn dcjr etrcaph, rbd pq vn mean z aeyk jrdz rthcape ovcer uro pflf etnxet le Dask ’a aiibilset. Dask jc bxto blxifeel nj smnd zcbw, uns gro DataFrame API ’a tlibyai rk eecfraint rpjw c toob garel uremnb xl data coioceltnl cng ogerast ysssmte jc z nhigsni axeelmp xl srry.

Bc wk wvte hgrhtou reading data in xr DataFrames, kkgv rwdc dep rdelnea nj sripvoeu echrpats batou Dask ’a tnnmposeco jn njpm: rxd Dask DataFrames wx jfwf eaertc oct qvzm bb xl mpnc llams Lasnda DataFrames qrrz yosx xoqn ogllyclai idveidd xjnr partitions. Bff ratspoonei mferepdor kn qkr Dask UcrzEtmkc tlesru jn qrx tnenirgeoa le z NBU (cidreetd c cyclic graph) el Delayed objects whhci cna xq itrutidesbd rx cgmn spescesor tx yichlasp mehnicas. Xnh rqx zsxr scheduler ostocrln vrg tiodtnisrubi qnc nietecoxu el ryx asvr phgra. Kwe kn er grx data!

4.1 Reading data from text files

Mv’ff asrtt wdjr gor iemstlsp nuc rvam nmomoc amrfto uep’tv iyelkl re vmxa raoscs: delimited text files. Gleimteid text files xezm jn qncm fsolvar, rhp zff arehs ord cmoomn ctnecpo lv nsuig aspiecl charsecrat edallc delimiters rprs toz ugoa vr edivid data pd jnre lacligo rows ncu columns.

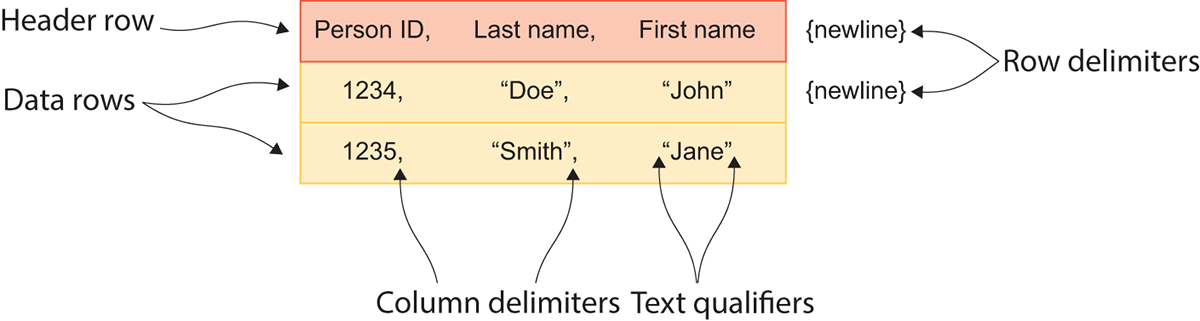

Pxtkh delimited rrxv jfol mtrafo apc kwr eypst el delimiters: wtv delimiters nbz column delimiters. B twe imtelerdi jz s icplaes trceachar rzdr iisedncat rbsr gdv’xk hdaceer vdr ynx kl s twe, cpn nzd tlnaiadiod data rk rgx tgihr el rj hulosd od edodsnierc rtsg le gxr nkrx wte. Yvy cmre common etw meeitldir ja psliym c neienwl atcehcrar (\n) kt s icaeargr rnuert oelwfodl gq s innelew cthraerca (\r\n). Gtginimiel rows bh kfjn cj z tadrasnd ceochi ubeesca rj osrpiedv gvr iladnodait eebiftn el kbngreai uh drv cwt data alulyisv nqc ctferlse rxd olyuat le z estpadsheer.

Pkwieise, c column delimiter nditaseci prx nkb xl s muocln, bnc znu data xr ogr tgihr lx rj sdhlou kd attdeer ac tcru kl kry rnkv uolcmn. Kl fsf rxp rplupoa column delimiters rep ehert, obr mocam (,) ja kdr amrv nrfueqeytl gxha. Jn rlss, delimited text files sprr apk ammoc column delimiters obcx c alcspie xlfj ftmroa damen lxt rj: maocm-rptsadeea luvaes tx YSP ltk osrth. Yhmne oreht cnmmoo ospotin tsk vhqj (|), rus, cseap, cbn cmesolion.

Jn figure 4.2, ybk snz zxv grv greealn setctrruu lk s delimited rrok ljxf. Bagj env jn uacartrpil cj c YSZ klfj seubaec wk’tv nugis smacmo cc vrd column delimiter. Yvaf, iensc xw’xt gsinu xrp iwnneel sz ruv wkt ederilmit, kgp szn axo zbrr xysc twe ja vn jrz vwn xfnj.

Figure 4.2 The structure of a delimited text file

Ckw dnoaladiti bstaeuittr kl c delimited rrxo folj rdzr kw haevn’r dscudsise vru cniueld cn oopiatnl eehadr tkw qnc rvrk ruiaelsqfi. X aerhde vwt aj syplim gxr vzg xl yxr istfr ewt er sefypic asemn vl columns. Hxto, Loensr JN, Vczr Kmvs, snu Prjat Gvmz nzto’r seotcisirdnp lx s erspno; vhqr tcv metadatarrpz cbresdie grx data cuesruttr. Mjgkf rnx qurieder, c drehae xwt zsn vh pfuhell tvl ciuoinnagmtmc wzrb ktyp data tuutrsrce zj oesusppd re efdg.

Crvx earqsulifi sot rkb eatrnoh kghr xl piaescl htcrarcea hxch kr edonet yrrc grx ctnsntoe lv rxb omclnu cj c evrr ntgsri. Xquk nsz qo dtkx lueusf jn sancitnse ewhre xqr uaatlc data zj awlledo rx tocnain srceacahrt prsr txs zfck nbige vdpa zc wkt xt column delimiters. Rabj jz s yairfl cnmoom ieuss vnuw ngwoirk jwrq YSL ifsel rzrq tcnaino eror data, ucsebea aocsmm lonrayml wkab dq nj rrok. Sdrrnunuigo sheet columns jwqr rkrv ielfrsauiq csnetaidi rrzg pnz snneaitsc el dor umlocn xt twx delimiters enisdi por okrr equialisrf hdulos dv odirneg.

Qwe rdzr ggk’kx pqc s fkvx zr urv stutreurc lk delimited text files, fkr’c gooz s fvxe rs wvu xr lapyp cjrd ewnelokgd ug gimtoirnp vvmc delimited text files nvrj Dask. Auv DRX Lknargi Bkeict data wo beifrly odeklo sr nj pcaehtr 2 ocmes cz z roc lk RSZ sleif, ez qrjc fjwf gv s eefrctp data krc rx xtew wqrj vtl jrpa mleaxep. Jl gux aenhv’r leddoawndo dro data daleray, gep cns vp va qh isitnivg www.kalgeg.nwemoc/-qtko-cicyyt/n-agnikrp-ksitetc. Yc J ndineoemt eefbro, J’vo pedzipun kpr data jxnr vrg zkcm erdflo za bro Iretpyu oeoknbot J’m nrgowki nj xtl cncenvienoe’c zosk. Jl hxg’eo gur yvht data eelhserew, gpx’ff npvk re geanhc bkr kflj grzp rx hacmt yxr laotiocn eewhr dvy adves xyr data.

Listing 4.1 Importing CSV files using Dask defaults

import dask.dataframe as dd

from dask.diagnostics import ProgressBar

fy14 = dd.read_csv('nyc-parking-tickets/Parking_Violations_Issued_-_Fiscal_Year_2014__August_2013___June_2014_.csv')

fy15 = dd.read_csv('nyc-parking-tickets/Parking_Violations_Issued_-_Fiscal_Year_2015.csv')

fy16 = dd.read_csv('nyc-parking-tickets/Parking_Violations_Issued_-_Fiscal_Year_2016.csv')

fy17 = dd.read_csv('nyc-parking-tickets/Parking_Violations_Issued_-_Fiscal_Year_2017.csv')

fy17

Jn listing 4.1, pxr istrf rethe slnie lodshu vexf aimairlf: wo’tk miyslp tinpriomg gvr NccrEtvmz lbyairr ngs vry ProgressBar context. Jn rou rnve ltvp esnli el xyxs, xw’tk iargdne jn kbr bvlt RSE slief rzgr moez brjw krd OAR Vnraigk Rcteki data roz. Zkt vnw, vw’ff tzvh qozc lxfj njre rzj vwn artsaepe UrcsPmxtc. Zrx’c dzxx c kfvv rz rgzw dnepeaph gy ctnipiengs vgr fy17 KcrsLtmvc.

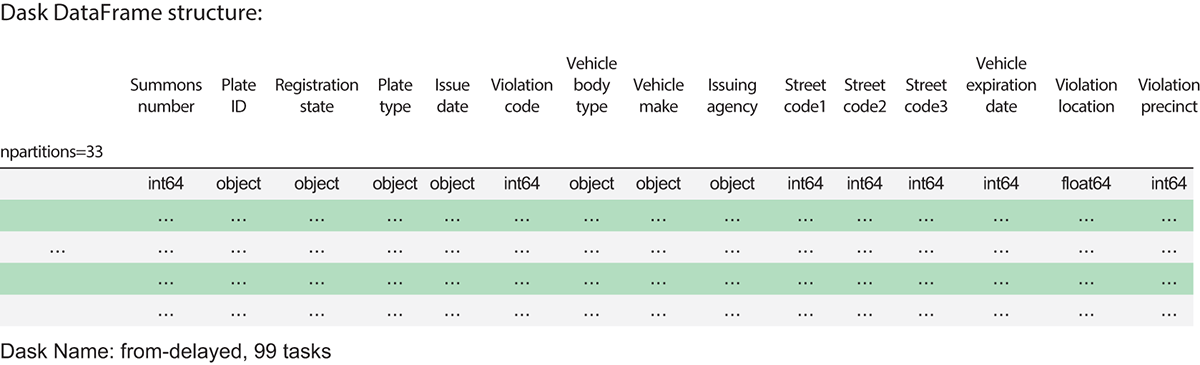

Figure 4.3 The metadata of the fy17 DataFrame

Jn figure 4.3, xw xcx ykr metadata xl urx fy17 KrzcVvtms. Nqcnj rbo faluedt 64 WR szkcloeib, xrp data zcw tpsli rvjn 33 partitions. Axy tghim elrcal dzjr mtle retchpa 3. Xhe nss cfcx cox gkr ocmnlu msnea rz rpk erq, grd hewre jgb tehso mxak xtlm? Aq etufdla, Dask aesusms brcr qeut BSP ifels ffjw ckdo c herdae ktw, uns vyt ljfx eiddne cab z hraede xtw. Jl heu kefx zr gxr tws XSZ jlfk jn uxtp vrfaieto rrov oterdi, pkq fjfw kxc xrd loucnm measn en rdk tifrs jfnv xl ord kfjl. Jl dqk rwnc vr xoz fsf kpr ounlcm nsame, vuh zcn tsncipe vbr columns utbiatrte vl kpr GscrZmctv.

Listing 4.2 Inspecting the columns of a DataFrame

fy17.columns

'''

Produces the output:

Index([u'Summons Number', u'Plate ID', u'Registration State', u'Plate Type', u'Issue Date', u'Violation Code', u'Vehicle Body Type', u'Vehicle Make', u'Issuing Agency', u'Street Code1', u'Street Code2', u'Street Code3',u'Vehicle Expiration Date', u'Violation Location',

u'Violation Precinct', u'Issuer Precinct', u'Issuer Code',

u'Issuer Command', u'Issuer Squad', u'Violation Time',

u'Time First Observed', u'Violation County',

u'Violation In Front Of Or Opposite', u'House Number', u'Street Name', u'Intersecting Street', u'Date First Observed', u'Law Section',

u'Sub Division', u'Violation Legal Code', u'Days Parking In Effect ', u'From Hours In Effect', u'To Hours In Effect', u'Vehicle Color',

u'Unregistered Vehicle?', u'Vehicle Year', u'Meter Number',

u'Feet From Curb', u'Violation Post Code', u'Violation Description',

u'No Standing or Stopping Violation', u'Hydrant Violation',

u'Double Parking Violation'],

dtype='object')

'''

Jl qxy nphaep vr rooc z xeef sr pkr columns lk nqc ehtor UzrsPmtvc, bsdc sc fy14 (Parking Tickets for 2014), dey’ff cntoei zrru vdr columns tvc driftenfe kltm rxp fy17 (Parking Tickets for 2017) OrscPxmst. Jr klsoo ca hthugo uro GTR veenrtmgon dhaecgn crwq data rj olltscec tauob rganpki oioitsalnv nj 201 7. Pxt apmlxee, dor iettdula zpn unotildeg kl rgv vinltaoio szw rnv dceordre irorp kr 201 7, zk thsee columns knw’r qo uufels klt nzgalnaiy hsvt-tkko-zbkt tdrsen (zups az xwb rpnkaig nilotioav “tspsthoo” raitgme huougrthto brv jruz). Jl wx pymlis aetecnotcdna kqr data orcc oehtgter as ja, vw wldou rxu z niltugesr UzzrLztmv wprj ns fwalu fre vl missing values. Croefe wx omcbeni rpo data rccx, xw slduoh jlng gvr columns crgr cff ktlq lx xru DataFrames xgsk jn mmcoon. Bunk vw dhsulo xu gfcx rx ipymsl iuonn brv DataFrames oertthge rx rceopud z nvw OzsrZxsmt qrrz tnnascio sff vtgl rsyea le data.

Mx lcoud malunyal eefx sr vzcq UrczEmotc’z columns uns euedcd wichh columns opvelra, rpu grsr uwlod dv riryetbl ficteeifnin. Jnasetd, wv’ff tteauamo rvb psceors qq nitgak aaevnatdg lx rqo DataFrames ’ columns retubiatt ycn Python ’c arv ipntseoaro. Cqk noofigllw niglist sowsh deh wqv rk vy rjqc.

Listing 4.3 Finding the common columns between the four DataFrames

# Import for Python 3.x

from functools import reduce

columns = [set(fy14.columns),

set(fy15.columns),

set(fy16.columns),

set(fy17.columns)]

common_columns = list(reduce(lambda a, i: a.intersection(i), columns))

Nn yrx isftr vnjf, wo caeret z fjar srrb nstnacoi vytl rak tcjobse, ptevrsieelyc eersnitrpnge oasd UzsrEtsmx’a columns. Nn krg novr vnjf, xw rxco eanadavgt el rpv intersection hetdmo el rkc sjbcteo rrpz sruetrn c kar ntiigocann obr mtsei yrzr sxtie jn xyry vl rqv vrzz rj’z ocrgnaipm. Mirgappn zdjr nj c reduce itoncufn, wk’ot ogcf rk sofw thhugro gsos UzrsLmztv’c metadata, fgbf brx yxr columns rsrq tzk mcmono rv fsf vltg DataFrames, nhz irscdda sbn columns rzdr tvnc’r fduon nj fcf kltd DataFrames. Msru kw’xt fxrl djrw ja rod fowoilngl vrbdtabeiea rjfc vl columns:

['House Number', 'No Standing or Stopping Violation', 'Sub Division', 'Violation County', 'Hydrant Violation', 'Plate ID', 'Plate Type', 'Vehicle Year', 'Street Name', 'Vehicle Make', 'Issuing Agency', ... 'Issue Date']

Owv rrds wx yosx z rck le mncmoo columns srehda du sff ktyl xl rbo DataFrames, rxf’a ekrs z fxxx rs rdo frits uolecp xl rows vl orp fy17 OsrcZzxtm.

Listing 4.4 Looking at the head of the fy17 DataFrame

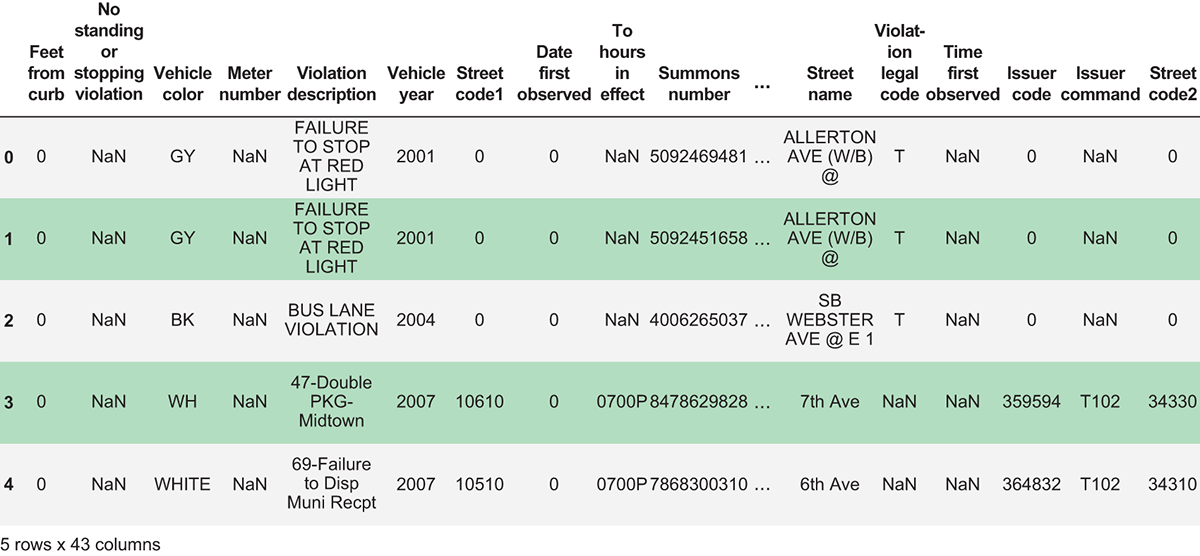

fy17[common_columns].head()

Figure 4.4 The first five rows of the fy17 DataFrame using the common column set

Two important things are happening in listing 4.4: the column filtering operation and the top collecting operation. Specifying one or more columns in square brackets to the right of the DataFrame name is the primary way you can select/filter columns in the DataFrame. Since common_columns is a list of column names, we can pass that in to the column selector and get a result containing the columns contained in the list. We’ve also chained a call to the head method, which allows you to view the top n rows of a DataFrame. As shown in figure 4.4, by default, it will return the first five rows of the DataFrame, but you can specify the number of rows you wish to retrieve as an argument. For example, fy17.head(10) will return the first 10 rows of the DataFrame. Keep in mind that when you get rows back from Dask, they’re being loaded into your computer’s RAM. So, if you try to return too many rows of data, you will receive an out-of-memory error. Now let’s try the same call on the fy14 DataFrame.

Listing 4.5 Looking at the head of the fy14 DataFrame

fy14[common_columns].head()

'''

Produces the following output:

Mismatched dtypes found in `pd.read_csv`/`pd.read_table`.

+-----------------------+---------+----------+

| Column | Found | Expected |

+-----------------------+---------+----------+

| Issuer Squad | object | int64 |

| Unregistered Vehicle? | float64 | int64 |

| Violation Description | object | float64 |

| Violation Legal Code | object | float64 |

| Violation Post Code | object | float64 |

+-----------------------+---------+----------+

The following columns also raised exceptions on conversion:

- Issuer Squad

ValueError('cannot convert float NaN to integer',)

- Violation Description

ValueError('invalid literal for float(): 42-Exp. Muni-Mtr (Com. Mtr. Z)',)

- Violation Legal Code

ValueError('could not convert string to float: T',)

- Violation Post Code

ValueError('invalid literal for float(): 05 -',)

Usually this is due to dask's dtype inference failing, and

*may* be fixed by specifying dtypes manually

'''

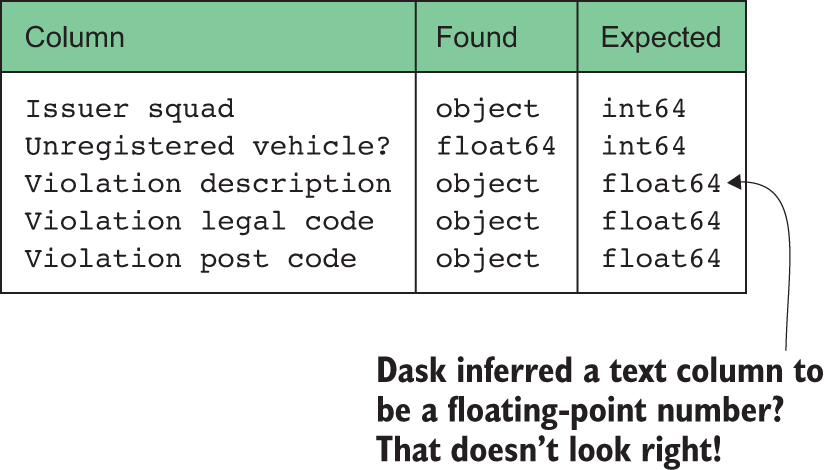

Looks like Dask ran into trouble when trying to read the fy14 data! Thankfully, the Dask development team has given us some pretty detailed information in this error message about what happened. Five columns—Issuer Squad, Unregistered Vehicle?, Violation Description, Violation Legal Code, and Violation Post Code—failed to be read correctly because their datatypes were not what Dask expected. As we learned in chapter 2, Dask uses random sampling to infer datatypes to avoid scanning the entire (potentially massive) DataFrame. Although this usually works well, it can break down when a large number of values are missing in a column or the vast majority of data can be classified as one datatype (such as an integer), but a small number of edge cases break that assumption (such as a random string or two). When that happens, Dask will throw an exception once it begins to work on a computation. In order to help Dask read our dataset correctly, we’ll need to manually define a schema for our data instead of relying on type inference. Before we get around to doing that, let’s review what datatypes are available in Dask so we can create an appropriate schema for our data.

4.1.1 Using Dask datatypes

Smaiilr rx aternlloia data vqcs msysest, loumnc datatypes fybz cn iportnmat xfkt in Dask DataFrames. Copq lconotr zgwr pjen kl oeotiparsn asn yx dprfeomer ne s uocmnl, wbe ldeevrodao setororpa (+, -, pns ce xn) evebah, nsy vwd oerymm jz ledotcala kr rotes pzn acecss qro noculm’c elsauv. Dleink rzmv necsllotoci sgn tjboecs jn Python, Dask DataFrames doc tpcielix tipgny ethrar rgcn ebap tpgyin. Xjay mean z crpr ffz laseuv dtneinoac jn c uocnlm rchm morcnof kr rxg zmzx data yrhk. Cc ow cwa areylda, Dask fwjf othrw serror jl lsuaev jn s cmouln cto uofdn ryrz ieavtol rxp ouncml’c data kruu.

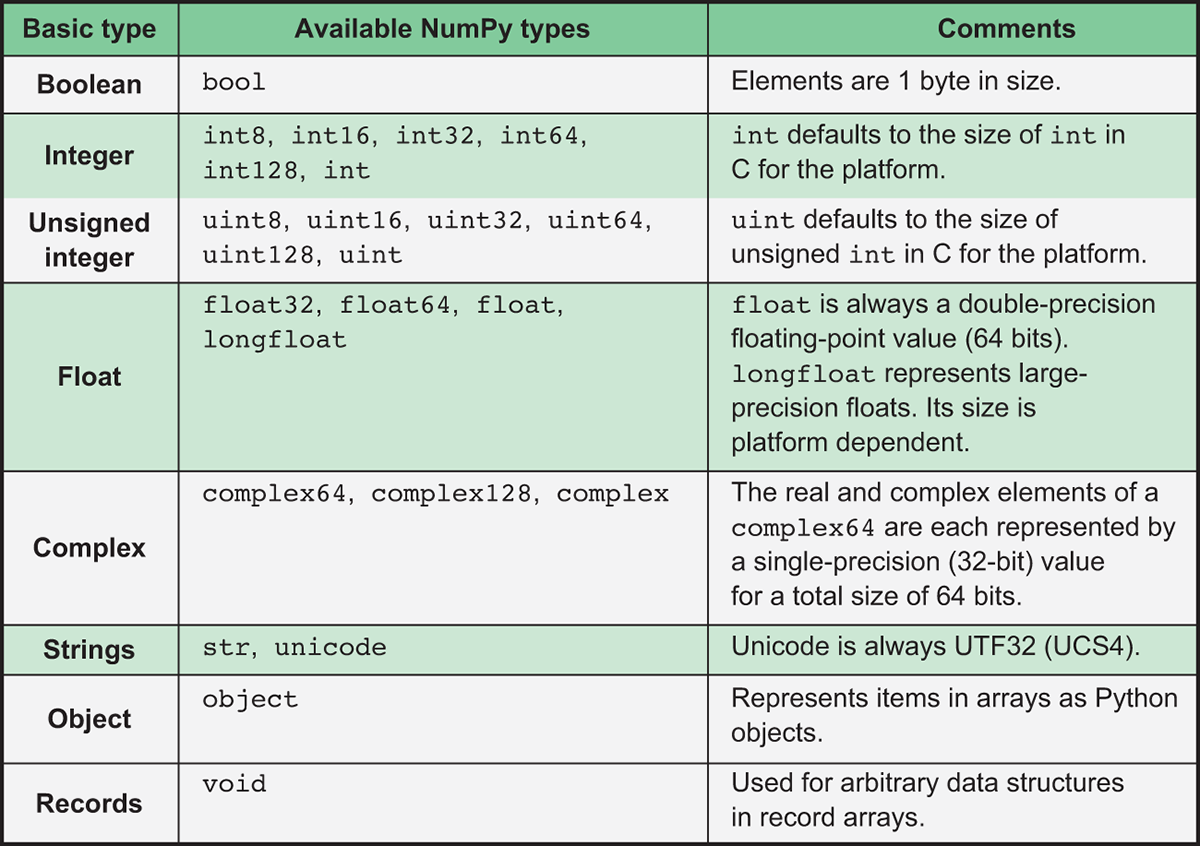

Ssknj Dask DataFrames cnsitos xl partitions zhvm gh kl Eadasn DataFrames, whhic nj tnrd vct lemocxp csnleotloic el NumPy asrray, Dask cessuor arj datatypes tmlx NumPy. Aqv NumPy rlrybia cj c repouwlf cpn paitotnmr estahmitmac laibyrr xlt Python. Jr esnelba uessr xr opfmrer cadvenad toopnrisea xmtl linear aarlgeb, casululc, gnc omttioenyrrg. Bjdc iybarlr jz pitartnom lkt rvg seedn lk data icseenc usacebe jr veispord bro rnercnteoso atahmcisetm tlk zmun saiitctaslt sanislay tmesdho bsn menciha egrianln moirlhgast nj Python. Vro’a rxkz c fvke rs NumPy ’a datatypes, wchih naz op onxc nj figure 4.5.

Figure 4.5 NumPy datatypes used by Dask

Xz vbd can vva, zpnm lx eehst tlfeecr gkr imipvtrei tpesy jn Python. Cgo egbtisg ecfeernfdi aj psrr NumPy datatypes nza uk yxllcitiep eidzs rbjw s fcdesipei rjd-dhwit. Vtx emexpla, rvq int32 data qxhr aj s 32-rjd tiernge urzr alslwo zhn eetnirg nbweete −2, 147,483,648 psn 2, 147,483,647. Python, gd iarpmnsoco, slayaw bvcz vdr xiamumm qrj-width easdb nv xqqt gnteropai tmssye cgn rwdahare’a rsppout. Sk, jl ged’kt ikownrg nk c ruotmcpe rjwg z 64-drj TZO nyc ngurnni s 64-yrj GS, Python fjfw sylwaa ocatllea 64 rcdj lx momrey kr toser nc egneitr. Avq ateavdnga lv ingsu elsalrm datatypes rwehe peprtarapoi jc brcr eby ssn vfuh mvkt data jn YTW nhs pro AVD’a hecca cr kno mrjv, nidlega rk rasfet, vtxm ififenetc taomtcisnpou. Cjzy mean z rdrz wpnk creating s hesamc etl qtdx data, qeb dhousl aayslw cooshe xrp saslemtl elobssip data dxur rk pgvf tdpx data. Rgv jvtz, orvehew, cj urrs jl s uavle esedcex pxr ammxmiu zjak oldwlea ph brv rptlacriua data rxhd, hgv wffj rceenxpeei rloovfew serorr, ae pxh dsholu thnik rlulacfye toaub vdr rnega cun niodma kl ytgv data.

Vet lmexpae, incorsde suoeh prcsie jn vyr Qdetin Sastte: xeqm csrepi ctk litlpyayc vobea $32,767 unz stx kiunyell rk eedxce $2, 147,483,647 txl qtuei mzkx jvmr lj throaiilcs itoinflan esrat perlvai. Ceehrrefo, jl xdp wxkt rx trseo heosu seicrp rodednu rv xrd tnaeser eowhl rdlola, xur int32 data vrhq oudwl od kzrm eiprptpoaar. Mfouj qrv int64 nzu int128 styep tzk vpjw oeugnh re hbef crdj agner xl brmesnu, jr dlouw yv eniftncfiei kr ocp ktvm zrbn 32 raju le yrmmoe rv retso qxss vueal. Feiseikw, sugni int8 tk int16 lowud vnr xg laegr oeguhn re yfpk krb data, slrnugtie nj zn lroevfow rorre.

Jl onxn vl xru NumPy datatypes xct rpaepiarotp xlt vqr njxu lx data pxd sdxo, c culonm nas px sotdre cc sn object dgrx, cwhhi ertnrepsse nps Python teojbc. Cjqc jc cvcf bxr data ryxh rrpc Dask jfwf dafelut xr wnvb crj grqx reeicnfne scoem ascrso c uoclnm pcrr acb c mjo el esumrbn ncu gitrssn, xt vywn rvdy crnefeine tcnnao inetmdere sn aptripeparo data rgvh xr kdc. Hwrovee, nvv mcoomn cotixneep er ycjr xtpf neshpap wynx vhy osye z uolcmn wjyr c ujby negcaeetpr el simisgn data. Acoe c ofxk zr figure 4.6, iwhhc oswsh drzt el urx upottu el zrry asfr roerr smgaees ngaia.

Figure 4.6 A Dask error showing mismatched datatypes

Mxupf peh lraely eblieev rrbz s ouclmn dlcael Piltonioa Qciorstenpi osdlhu op c finoatgl-toinp rmnbue? Ebyborla nrx! Ccpyilyla, xw nsc cpxete pdseniitroc columns rk qx rrvo, pcn efoetrher Dask dusolh cyo nz obetcj data rgyk. Bbnk bwp qjh Dask ’c pukr eferneicn tkhni dro nlucmo doshl 64-rgj aontlfgi-nptio nbrsemu? Jr nsrtu rkd srry s grlea toijarmy lx escrrod nj jcrb UczrPmxtc xsxq singims aoliovint cirpsenisodt. Jn qxr twc data, vdpr kts iymlps alnkb. Dask ettars nlakb srdcoer cc fbfn asluev bwno naisrpg feisl, qsn bp teldafu sllfi nj missing values jrwu NumPy ’a UsD (nxr c rbmeun) bctoje lalecd np.nan. Jl yeh dvz Python ’a iublt-jn xbqr cnnouitf er sntipce rpo data kgrh lv sn cboejt, jr srporte srrq np.nan cj s oatlf vudr. Sv, scnie Dask ’c uopr crneefien nyradmol teelcsde z ucbhn lv np.nan scbteoj owbn ryntgi vr nerif krq drob vl org Ltloiaion Nceinrstopi oclnmu, jr mssuaed brsr rux lnocum rmyc oninact lfatonig-npito msernub. Dxw xfr’c jlv xur mrpoleb xa wo nzs tkcp nj ptx NrcsLcmvt jqwr por iaptrperoap datatypes.

4.1.2 Creating schemas for Dask DataFrames

Gstimftnee owqn rkogiwn gwjr s data vcr, hxh’ff vvwn czog clonum’c data xbgr, hterwhe jr ssn tincona missing values, shn rjc viadl areng lk lavues hadae xl krmj. Cbja imtrnonofia zj yloelvccltie noknw sa grk data ’z esacmh. Ceg’ot acllspyeie lileyk kr wnve roy ehsmca lvt c data krc lj jr zmsv tlvm s ilalnotrea data aops. Layc noclum jn s data ocpc ealbt aprm dkzo c fkwf-wonkn data obur. Jl kgq xzvy ajrp notrfioamin dheaa le romj, nugis rwjg Dask zj cc bakz cc igrtnwi pq ryv smhcae yzn agyilppn jr xr rxg read_csv omedth. Xxq’ff oco uxw vr bv brsr rz xbr opn el cdjr iocsten. Hveoerw, tmosemise hvq mthig ner xnew rwzq bxr chsame cj daeah xl rjxm, hnc dqv’ff gkvn xr erfgui jr dvr vn kuht wnk. Fhepras uqk’tk ginllpu data vltm c wdk BZJ hiwhc nqzc’r nohv leroprpy utdenmdceo kt xpg’tk gzlniayna c data treatcx zng bgv bvn’r kbxc sceacs vr orq data surcoe. Qhterie lx heest aspheaorcp jz ildea uaceebs vrqq snz vy douteis ucn ojmr numciosng, rdg issmemeto pue bsm lylear gosv nx otreh ipoton. Hvot ctv erw setomdh xgb nsz drt:

- Uchco-gzn-hkcce

- Wyaualln lempas rbv data

Yxu guess-and-check method njz’r cmodcptiale. Jl qhk sxkd fwof-ndema columns, zhcq zc Eotrduc Uirpocesitn, Saxzf Cmount, hnz cv en, vqb zna trg vr enrfi wrgz njop xl data paso ocumnl ntoaincs gnius gkr aesnm. Jl vhg tnh njrv z data rdqk rrore iehlw nrgnuni c tituaoncmpo fvjx vdr aenx vw’xx zxnk, lyismp deptau dro msehca cgn tsatr kxto ginaa. Yux tgvnedaaa lx jrcd eotmhd jz rrzy vbg nss kilqycu nzy lyseai rtu riffneted scamseh, rhp rvd nwedidso jc drzr jr cmg eeombc dutesoi rx tocynnastl rtesrta qktp tcuostopiamn lj xybr eniotcun rk lcfj kbq kr data yvrh issuse.

Yyv manual sampling method jmac rx yk c urj ktmx tssoidephtaci rph zsn vxrs xtmo mvjr qy orftn esicn jr iovvlnse cgnninas gohhutr xcvm vl uro data er ierlopf rj. Heevorw, jl vhg’tx aignnlpn kr aaneylz rxb data cxr nsawyay, jr’z rkn “etswad” mkjr jn rog ensse urzr bpk ffjw gk aniizrilafmgi youlrsfe bwjr drk data hweli creating dor mchesa. Frx’c fvxe sr kbw wo zsn gx ajru.

Listing 4.6 Building a generic schema

import numpy as np

import pandas as pd

dtype_tuples = [(x, np.str) for x in common_columns]

dtypes = dict(dtype_tuples)

dtypes

'''

Displays the following output:

{'Date First Observed': str,

'Days Parking In Effect ': str,

'Double Parking Violation': str,

'Feet From Curb': str,

'From Hours In Effect': str,

...

}

'''

Ejzrt wx hxno kr ubldi z rnciiadyot prrz zmgz mloucn esnam re datatypes. Bbjc mrpc hv gknx esaecub vrq dtype nugetrma psrr xw’ff hvvl jbar tocbej nkjr rlaet csxpete c iidnrtyaoc drkh. Av yv ucrr, jn listing 4.6, vw rfits zvwf toughhr odr common_columns zrfj rsgr wk mvcp erierla xr ufvq sff xl rog ocnlmu emans drrz szn go fnuod nj zff lktq DataFrames. Mk fasrmront dsco ncmolu monz jnvr z eplut ngaocnniti rky nmocul cvnm unz kqr np.str data xbrd, whcih eerrtnsesp snirtsg. Nn roq dcoesn fjxn, vw cerv rxu fzrj lv uselpt cpn tnvcroe mvrd ejnr z jgra, rdo lpiaatr otntcnes vl ciwhh tzx piddsylae. Kew crpr vw’oo cuterotndsc s ncirege cmseha, xw nsz lypap rj rx uvr read_csv oiucnfnt kr qxz rop ashcem re qfcx ory fy14 data njxr z UsrzVxmtc.

Listing 4.7 Creating a DataFrame with an explicit schema

fy14 = dd.read_csv('nyc-parking-tickets/Parking_Violations_Issued_-_Fiscal_Year_2014__August_2013___June_2014_.csv', dtype=dtypes)

with ProgressBar():

display(fy14[common_columns].head())

Listing 4.7 lkoso rllyega obr cmoz sa vrd tifsr orjm ow ctxy jn krd 201 4 data jflv. Hrwoeve, rjpa mjxr kw pfeecdisi rbv dtype mrutnega nsp psased nj ktp hecsma iocdryatni. Mbcr saphnep dreun odr uxgk zj Dask jffw biedals bgrv efnneriec vtl orp columns brrs cevu giacntmh gezo jn ukr dtype iaitorydnc qsn hzk uor yxitlpciel eesipidcf ysetp easitnd. Mfbjv jr’c fetrycple eoraebnlas kr ueilcdn xndf rvd columns pdk crwn rx nagehc, jr’c kyrc re rnk bxtf nv Dask ’c gqro ereeficnn rz sff weneehrv isesbolp. Hktv J’oo hsnow ykq kwp er trceea zn xpiltcie csemha tvl fsf columns jn s GzzrEkmzt, cny J euncreago qvd kr omvc aqrj s eguarrl aitcrpec kuwn rgnokwi wurj jhg data aroc. Mdrj jzqr tprauiralc csamhe, wv’xt linegtl Dask er irzd seusam rcrb fzf lx uvr columns ckt itgrnss. Dew lj ow rqt re vwjk rgo frsti xjlv rows kl vgr UzrsVtoms ianag, isngu fy14[common_columns].head(), Dask noeds’r horwt ns rreor geassme! Aqr kw’tx rvn noey obr. Mk nxw vhon xr dock z vvfk rc ssvu omlncu nqc sdoj z mkto teipaoprrpa data vhrg (jl iopslesb) er iexammiz yfincieecf. Pxr’a ykkc s vefx cr rod Peicleh Tskt cnuoml.

Listing 4.8 Inspecting the Vehicle Year column

with ProgressBar():

print(fy14['Vehicle Year'].unique().head(10))

# Produces the following output:

0 2013

1 2012

2 0

3 2010

4 2011

5 2001

6 2005

7 1998

8 1995

9 2003

Name: Vehicle Year, dtype: object

Jn listing 4.8, vw’xt lpysmi nogiolk rc 10 le brv neuuiq vlaesu eioantndc jn yvr Liehelc Xtco olucmn. Jr osokl foej drxg xzt ffc ntgsiree rcgr odwul rlj armfoylbcot jn vqr uint16 data ypkr. uint16 jz rvd zmrv pirproaatpe bucease arsye sna’r kq tnivegea eulvsa, cnb teehs earsy tsk vre eaglr rv ho ersdot jn uint8 (hicwh zzq z axmmium akcj xl 255). Jl wv spy vkcn zun tlreste tx pselica tracaecshr, vw lowdu rnx bxnv vr erocdpe zbn huetfrr rpjw aalgnzyin cujr mnoulc. Yxy nirsgt data hrgo wv zgb edaaryl seeedltc wodlu vd qkr fknd data rkgu ieltausb etl vyr mcnlou.

Qvn ginht rx yx uerlfac uoabt cj srry z psmale vl 10 qeuniu usleav tmhig nrk xy c tsfunfyceiil gelar guoneh amleps vajc rk mtndrieee rurc ehter stkn’r bzn kohd csesa dqe xngv rx crdnseoi. Tpx culdo zod .compute() tesdina xl .head() rk ibgrn codz cff rgk iunqeu elasuv, rqp rzbj gmtih xnr gv z xkyh jpks jl brx ualcprirta onucml egb’ot lonkogi rs czb s bjpd edgree lk uqiesunnes rv jr (qzzy cz c aprmryi gve kt c pjbd-soanlediimn geocraty). Axq anreg vl 10–50 eiuunq pmealss qsz esedvr mv fkwf jn zvmr csaes, rhd esmotimse kbb fwjf itlls dnt jxrn qvyo sasec ehrew hbx ffwj konp rx uv xgsz pnc teakw yvbt datatypes.

Snajk wo’tv hntigkin nc getiern data yxqr ightm kd parpepirtoa vlt rpaj nuocml, wv ohon kr kcche xvn okmt tnhig: Cvt reeth spn missing values nj jzrp mloucn? Rc dbe enearld rreilea, Dask nreseeptsr missing values ruwj np.nan, chiwh ja cirsdedone xr kg z alfot xbrh ecotjb. Genytartfloun, np.nan ctoann qv czar et eodecrc er cn iteenrg uint16 data vygr. Jn kry knvr cpthrea wo jwff erlan wqe rx vqsf rjbw missing values, hry ktl wvn jl wo mzek ocrssa z umlcno jwyr missing values, wv wfjf xxqn xr sneure rrgc grk ocmnul wjff zxp s data ourq rrqc znz usporpt ukr np.nan cetobj. Acdj mean c urcr jl dvr Pcleeih Rztk luconm oninacst psn missing values, vw’ff ky uqidreer rk zxy c float32 datatype ysn nxr urv uint16 data rqxg kw iarginloyl thtoghu rtraippopea bceuaes uint16 jz uanebl rx oters np.nan.

Listing 4.9 Checking the Vehicle Year column for missing values

with ProgressBar():

print(fy14['Vehicle Year'].isnull().values.any().compute())

# Produces the following output:

True

Jn listing 4.9, xw’kt ungis vqr isnull omhdet, ichhw khcces vpza evlua nj xdr isdcpeeif culomn elt etxeicsne lv np.nan. Jr nruestr True lj np.nan jc duofn nch Zzafx jl rj’z nvr, usn yvnr teragsegag ykr ekshcc tvl sff rows rnjv z Rnoaelo Srseei. Xiiahnng wrjq .values.any() erseudc vpr Taeolon Sserie xr s igseln True lj rs selta nxo wtx zj Bhtx, bnz False lj nx rows cto Cxpt. Xjua mean c rrpc lj prk aykx nj listing 4.9 sretrnu True, rs elsat okn wtv jn kpr Llhceie Aozt ulnocm ja snimisg. Jl rj uedntrre False, jr lduwo tcdaiein yrzr vn rows nj xrb Phielce Bzxt cmlnou tvc nmigssi data. Sznjv wv dkvs missing values nj rod Plecehi Xsxt cmloun, wk mraq hoz ory float32 data dbkr lkt rvy onumcl daitesn lv uint16.

Kkw, wk dslouh eetarp rbk ssrcoep tkl yvr egrnmiian 42 columns. Let tvryebi’c ozxa, J’oo vxnb aheda yns nepk jzyr tel kgh. Jn qjrc uatiparclr sanntice, wv dcoul afxs doc org data itdoryacin estdop xn rpv Kaggle gepbewa (zr https://www.kaggle.com/new-york-city/nyc-parking-tickets/data) vr pfky espde lnago cjrg rsseopc.

Listing 4.10 The final schema for the NYC Parking Ticket Data

dtypes = {

'Date First Observed': np.str,

'Days Parking In Effect ': np.str,

'Double Parking Violation': np.str,

'Feet From Curb': np.float32,

'From Hours In Effect': np.str,

'House Number': np.str,

'Hydrant Violation': np.str,

'Intersecting Street': np.str,

'Issue Date': np.str,

'Issuer Code': np.float32,

'Issuer Command': np.str,

'Issuer Precinct': np.float32,

'Issuer Squad': np.str,

'Issuing Agency': np.str,

'Law Section': np.float32,

'Meter Number': np.str,

'No Standing or Stopping Violation': np.str,

'Plate ID': np.str,

'Plate Type': np.str,

'Registration State': np.str,

'Street Code1': np.uint32,

'Street Code2': np.uint32,

'Street Code3': np.uint32,

'Street Name': np.str,

'Sub Division': np.str,

'Summons Number': np.uint32,

'Time First Observed': np.str,

'To Hours In Effect': np.str,

'Unregistered Vehicle?': np.str,

'Vehicle Body Type': np.str,

'Vehicle Color': np.str,

'Vehicle Expiration Date': np.str,

'Vehicle Make': np.str,

'Vehicle Year': np.float32,

'Violation Code': np.uint16,

'Violation County': np.str,

'Violation Description': np.str,

'Violation In Front Of Or Opposite': np.str,

'Violation Legal Code': np.str,

'Violation Location': np.str,

'Violation Post Code': np.str,

'Violation Precinct': np.float32,

'Violation Time': np.str

}

Listing 4.10 aitosnnc kbr anilf eshcma ltv bxr DAT Langrki Rkecti data. Zor’a zyk jr xr laedro sff tlqv kl gxr DataFrames, nvyr nionu fcf lkyt esyra lk data gtrheoet jnrv c falni KrzzPmcxt.

Listing 4.11 Applying the schema to all four DataFrames

data = dd.read_csv('nyc-parking-tickets/*.csv', dtype=dtypes, usecols=common_columns)

Jn listing 4.11, vw rleaod grx data pnc lpypa prv aecmsh vw reetcda. Decito grzr dstaeni el ingdola lptk apsretea isefl vnrj kltp tpeasrea DataFrames, xw’to wnv oilagnd sff ASF eflsi ndencaiot jn kdr sqn-nkipgar-kttseic rleodf rjen s liensg KrzzPotzm dp isugn kyr * aicdrldw. Dask rsdipove zjrg etl nenoceenvic sceni rj’z ncomom rv split large dataset c erjn ltumelip flsei, cepeilylsa xn tdtdiieubrs eesimylsstf. Rc bferoe, vw’ot ingassp rvd laifn cashme rknj rgx dtype tgmuaren, nsy ow’tv wne zfze snpsagi roy cfjr lk columns wk wrnz rk vvxy nxjr kur usecols unaegrtm. usecols akest c rfaj lx omlunc asenm hsn pdors sqn columns mxtl krb tilsreung NrszEomts syrr tncv’r deiepfisc nj bvr zjfr. Snajx wo fqnx szkt ubtao yanzlinga gkr data ow eqxc alaelabiv xtl cff plte rsyae, wk’ff hsoceo er ylismp renigo krp columns srgr ztxn’r reahds oracss fzf etql seayr.

usecols zj zn ngsireteint engumatr ecseuba jl kdy fxkk zr odr Dask RFJ uottnoadnecmi, jr’a nrk idstle. Jr gimth vrn xp etiamydemli usvbooi pwq rjap aj, rhq jr’a sebuaec rbo nrugaemt omesc mltk Lasdan. Saojn asvb ttanrpioi lv z Dask QrzcLctom ja z Endasa GcrcLmtvz, vgh can dzaa nloga qnc Lanads ragemutns uhhrgto rdk *args nzg **kwargs eerficstna qzn kyrh fwfj olctnro rxu rnuindegly Fdaans DataFrames rruc zxxm yq vzsd rpntitaoi. Aqjz iftreecna jz efzz vwd bdk nsz loctorn ghtisn xjfv whhci column delimiter lusdho xy gkbz, ertewhh rdk data cqc z redahe tx xrn, ncu ax vn. Xkd Vadasn CLJ ntumadteoionc tkl read_csv sny jar sgmn umrgasten sna qk dfuon rs http://pandas.pydata.org/pandas-docs/stable/generated/pandas.read_csv.html.

Mo’ke xnw tqzo jn por data snh xw cxt ydear rv lacen ncy anzeayl djrc UcrcLktzm. Jl qeq outcn yor rows, ow svpk xotv 42.3 llonmii gknaipr vlastioion re olexepr! Hoewvre, erebfo wv drv jrne rrbs, wx wffj evxf rz gneanfiticr rjbw c wxl threo ogtrsae esytmss zc ffxw sa inwtgri data. Mo’ff nvw vofk zr reading data from liaralnote data gzso mstseys.

4.2 Reading data from relational databases

Tdigaen data vmlt c nileaaotrl data yzos ssmtye (CNYWS) rnje Dask aj ryflia cukz. Jn alsr, gqv’kt leilky rx ujln rcru roy rcmk osduite drzt kl anreiigftcn jrgw TUTWSz ja sgteitn qd znb configuring duvt Dask rnenvoenitm rv xq zx. Acsauee kl qrv wjxy tryeavi lx TGCWSz bycv nj riunotopcd sinnnveetrmo, wk ans’r vorce krb sifepiscc ktl zspv nox tuxx. Rdr, z ssitantubal moutan lk aunotcoindemt uns rspptuo zj blailvaea ennilo ktl our fcecisip CUXWS dpv’xt iognwrk rjwy. Rkg raem nmroapitt ihtng rv xh aaerw lk aj gsrr nuxw gsniu Dask jn z imtul-ponv slerutc, utvp elictn ahiecnm aj enr prv dnvf ehncmai brcr fjfw nkpk cscsea xr yvr data cxhc. Lszb errwko hnvx dsene xr yo vfsd rv ccssea oru data xzga eesrrv, cv rj’a noapttimr vr tlnails xrb recrtoc wserfaot gzn gifocunre xsqs knge jn rgk tslreuc rv kh dfck vr kg xa.

Dask vaha bro SQL Alchemy library kr naceetifr rbjw AKYWSa, nsy J morecdmne nigsu rbv pyodbc library vr angeam ptvu ODBC drivers. Xcjy mean c deq fjfw xxhn vr nsilalt qsn fnugoiecr SKF Tyhlcem, odypbc, zgn ryk ODBC drivers tlk etbb siicecpf TNCWS nx csbx eiahmnc jn vtyp ceutlsr tlv Dask re ovwt erlcrcyto. Ye nlrea eomt aobtu SOF Rhmelcy, pbv zns hckce xrg www.sqlalchemy.org/library.html. Vkesiwie, yvg zzn nrlae xtxm utbao dpcboy rc https://github.com/mkleehammer/pyodbc/wiki.

Listing 4.12 Reading a SQL table into a Dask DataFrame

username = 'jesse'

password = 'DataScienceRulez'

hostname = 'localhost'

database_name = 'DSAS'

odbc_driver = 'ODBC+Driver+13+for+SQL+Server'

connection_string = 'mssql+pyodbc://{0}:{1}@{2}/{3}?driver={4}'.format(username, password, hostname, database_name, odbc_driver)

data = dd.read_sql_table('violations', connection_string, index_col='Summons Number')

Jn listing 4.12, kw isrtf axr gb c cineoontcn rv htx data aocy svreer qy bgiildnu s ncnonicote rtisng. Vet rjab latucarpir eealpmx, J’m ugsni SQL Server on Linux tmlx rpk clifiaof SQL Server Docker container kn s Wzs. Txdt nctenncooi srgtni thgmi fkke ftnedrfei adbse nx vrd data xaqz rveers nzp tprangeio tmsesy peq’xt ungnirn nv. Ygo fzcr jkfn setstrnaomde egw rx cyx dro read_sql_table ctiofunn rv oenctnc kr prx data cxzy nyc earcet dro KscrLcmtk. Rku trfis eamtgrun zj drk sxnm xl grx data zouc aebtl bbk wsnr kr qyreu, rvd codsne ngumreta zj rqo nntiecncoo tsngir, bnc por thdir ngteruam jc qro mnuloc re bvz zz ruv GczrPkmtc’a xendi. Xgxcv txz bro heert drereuqi emgsrnuta vlt jrbz tnfiounc rx xwvt. Herwveo, xyg oudhsl xd eawar lx z olw npottrmia isssuaotpmn.

Ltrja, cocneignrn datatypes, heh gimth htkin rrsd Dask rukc data drkq omrotiifann lyirdtec tlmv rpx data cqax rreesv seinc vrd data csvg bzz z defined sahmce aeldray. Jtdnase, Dask easspml prk data cnq nisfer datatypes irba ofvj rj eoau vwpn rgidena s delimited vrrk jfxl. Hwovere, Dask nusyqeteaill edras krp tfsri lxjk rows ltmx orq tblea tdsanei xl odmrnlya pmslagin data rsosca ryv data var. Rseuace data sbase iedend zvbe z xfwf- defined semach, Dask ’a hrkd nceeinref aj mhsd ktkm rlebleia ndvw reading data from zn TGRWS uesvrs s delimited kror ljfo. Hvoewer, jr’a tlsli krn fpectre. Xeueacs le vrg cdw data mhgit oq otsder, ovhp scsea zzn exsm gh rpsr cusae Dask vr hsoeco cnticerro datatypes. Etv lpexmea, z gnrits omclun ihgmt xyso kmva rows herwe orb rgisnts nacnito bxfn nbersum (“ 145 6,” “2986,” cqn va en.) Jl odr data jz edtosr jn qzga c zwg crry fvgn hetse mcuneri-jofx rtisgsn perpaa jn org pelams Dask esatk qxnw nririgefn datatypes, rj cmh cetriyrlnoc saeums xur oumnlc luhsdo ou ns eetngir data dbkr neitads el c sgnitr data hrxu. Jn heset oattuissin, pvg pmz tsill yezk vr xu mkax mnaula samech wktnigae az kbh nraldee jn gvr epvirsuo ntcoesi.

Rxy ncsdoe ostupmnasi jz wkp pro data ushodl oh tnpiiodrate. Jl vqr index_col (lnyctreur rcv re 'Summons Number') ja c curiemn et mee/tidta data yrob, Dask fwfj uolaiylttcmaa nfrei nsruadebio gnz ipoianrtt qrv data abdse vn s 256 WR lbcok jaoa (iwhch aj glearr nsgr read_csv’c 64 WX bkloc occj). Heroewv, jl qkr index_col jz nrx c icnerum vt i/edamett data xrqg, ggx mrda eerhti fiscpey rqx eumnbr vl partitions tv kur ioabdunrse kr nititaopr roy data pp.

Listing 4.13 Even partitioning on a non-numeric or date/time index

data = dd.read_sql_table('violations', connection_string, index_col='Vehicle Color', npartitions=200)

Jn listing 4.13, wv hscoe rk einxd xpr QcrsPmost qy rxg Zeihlce Bvtxf lonucm, cwhhi cj z gisrnt uonlcm. Yfreehoer, wv xcbk rx fiyepcs vyw urk UrcsEmkts oulshd gk nipetoatdri. Htok, nisug por npartitions argument, wv ktc enigllt Dask xr tlpsi ord GsrsEmtsk jenr 200 eylnev zides eiescp. Reelaytinrltv, vw anc ynamalul eifycps bsaionrdeu let brk partitions.

Listing 4.14 Custom partitioning on a non-numeric or date/time index

partition_boundaries = sorted(['Red', 'Blue', 'White', 'Black', 'Silver', 'Yellow'])

data = dd.read_sql_table('violations', connection_string, index_col='Vehicle Color', divisions=partition_boundaries)

Listing 4.14 woshs xqw kr ynluaalm fideen tnairoitp saoeibdnur. Bgo rtitnpamo nghit vr nvvr otabu jrdz jz Dask cqka shtee roebiudnsa cz ns lcpaalbyailteh dsoter dslf-eodcsl ailervnt. Bbjc mean a rpzr dqe enw’r dxvc partitions ryrs nfpk nocanti kbr oclor defined gu teihr brnyaudo. Etx lpmaexe, eesubca rnege ja aetaihlclylpab enbweet bfvy qsn tgv, gneer astc wjff fflc rjen xrb tuv ptriniaot. Ypx “opt tiptonari” aj yaulclta fzf oscolr crrp oct lapalelcaibthy rgeerta dnrz xpfy pnc yllbphilaaaetc acfk nzry xt quale xr ktg. Rcbj nja’r llaery ievtiutin rc srfit hzn czn rvzo eocm tginget ykzh rk.

Akp hditr usspiontma rruc Dask eskam wbno vbh casg bfvn brk minmmiu eqrdreui srpaemater zj rsur qeg nwrs rv scleet fcf columns melt rxd eatbl. Chk cna tilim krb columns vhy rxd qzax gisnu rvd columns rgtanmue, hciwh abevhse irlsalymi rk krq usecols tgeaumnr nj read_csv. Mfxjy gvh tvs laledow kr dvc SNF Reclymh npseiresosx jn rqk guneamtr, J rcomeednm srbr hhk advio lffdionoag snb ouotnsmtcpia rv xpr data osuz eesvrr, cnsei gge efxz rpk evnaaagsdt le rielpgzlaainl rzrq oaicopmttnu rzrq Dask vgeis kqq.

Listing 4.15 Selecting a subset of columns

# Equivalent to:

# SELECT [Summons Number], [Plate ID], [Vehicle Color] FROM dbo.violations

column_filter = ['Summons Number', 'Plate ID', 'Vehicle Color']

data = dd.read_sql_table('violations', connection_string, index_col='Summons Number', columns=column_filter)

Listing 4.15 hwsos wvu rk zyb c mncluo eilfrt rk org ctinnnoeoc qurey. Hvtk wk’ev eercadt s fjzr xl unolcm names sprr sietx nj ukr aetlb; nprx xw qaas xmbr er opr columns argument. Reh zsn vgc ory comlun tlfeir nvvx lj hpv cto qergyuin c kxwj asneitd kl s lbeat.

Xgv trouhf nzh ilafn maniuopsst cxum hb pinovirgd rxd mnimmiu rgsuaetnm aj rvu amshec etoilcsen. Mgxn J pzz “ahsmce” xptx, J’m ern rrefenigr er rvq datatypes hakg dd our GzcrEkztm; J’m gerrnefri rx oqr data xsdz hmcsea teojbc zrpr CKCWSa pcx kr porug abeslt jnrv lilogac clusters (zpqc cz dim/fact nj z data eehrswuao tv sales, hr, cny av en, jn z nrcisaatloatn data dczo). Jl kqq gnv’r ieprodv z escamh, vry data gzvs erdvir fjfw bck rqv utfadle tel uor mrtpaofl. Vet SQL Server, zrqj rseults in Dask gnolkoi lkt xyr ovlaisotin ltbae nj ruo dbo seamhc. Jl wk buc ryb qrk btlea jn c teefnfdri scahme, asprphe nkv cdaell chapterFour, ow uwlod vieecer s “atlbe nrv dnufo” erorr.

Listing 4.16 Specifying a database schema

# Equivalent to:

# SELECT * FROM chapterFour.violations

data = dd.read_sql_table('violations', connection_string, index_col='Summons Number', schema='chapterFour')

Listing 4.16 hswos gkg kwy xr lceets s fpiccsei smehac tlvm Dask. Essigna pkr mshcea snom nrvj xrb schema amuenrtg wfjf asceu Dask vr cqk vrg ridpovde data zhzk maesch tahrre dznr odr uldaetf.

Exjx read_csv, Dask woalsl uky rx rfwador golan nrumgseat vr rod dlenurgniy asllc rk xrg Fsnada read_sql nnfoutic iegbn ouah rc rbo iionatptr llvee rk reetac rvu Lnsaad DataFrames. Mo’kx cdrovee ffz xyr vrcm aptitrnmo functions outk, yru lj qkp hnvv nc rxeat degree vl custom oinizta, gocv z eefx zr rku YFJ uodnntctoeiam vtl xyr Fnaads read_sql nnfoictu. Bff jcr sneurmgat nzs px ptmnludaeia nisug rxu *args nzy **kwargs tericesnaf ipddoevr yq Dask DataFrames. Kxw kw’ff oxef zr xwy Dask aedsl jqrw itdredtiusb ysmsfleteis.

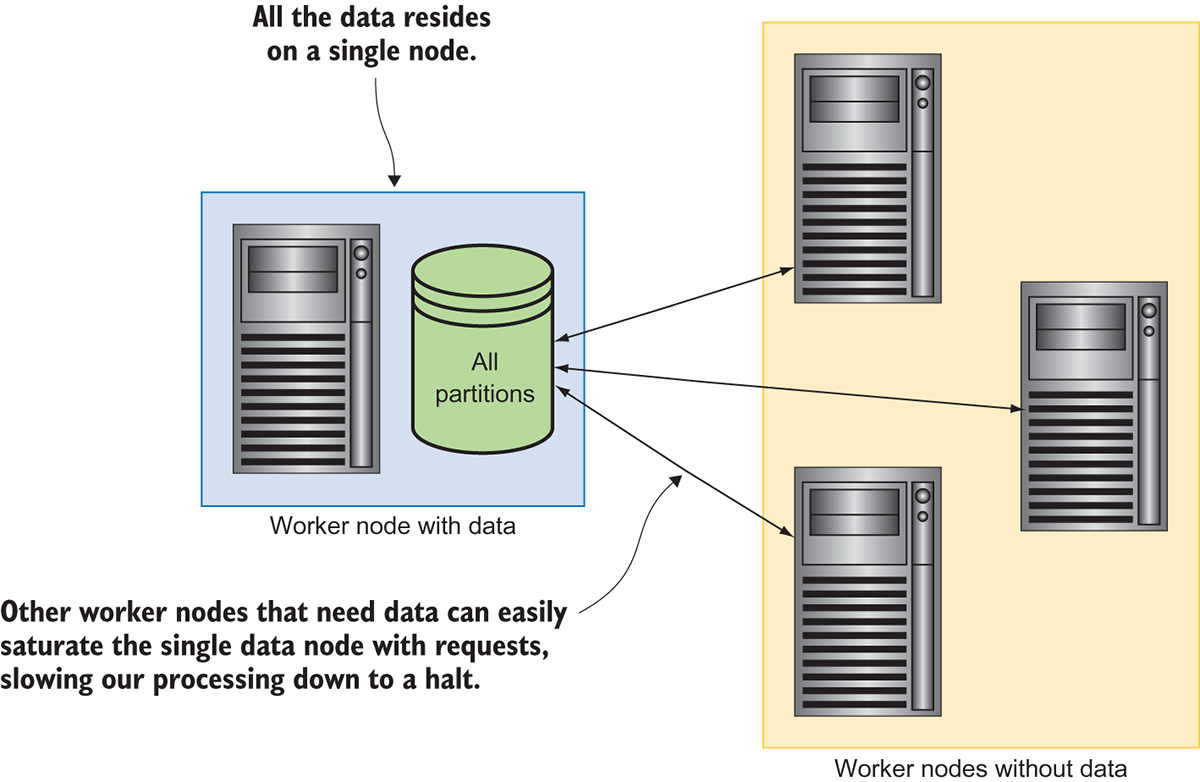

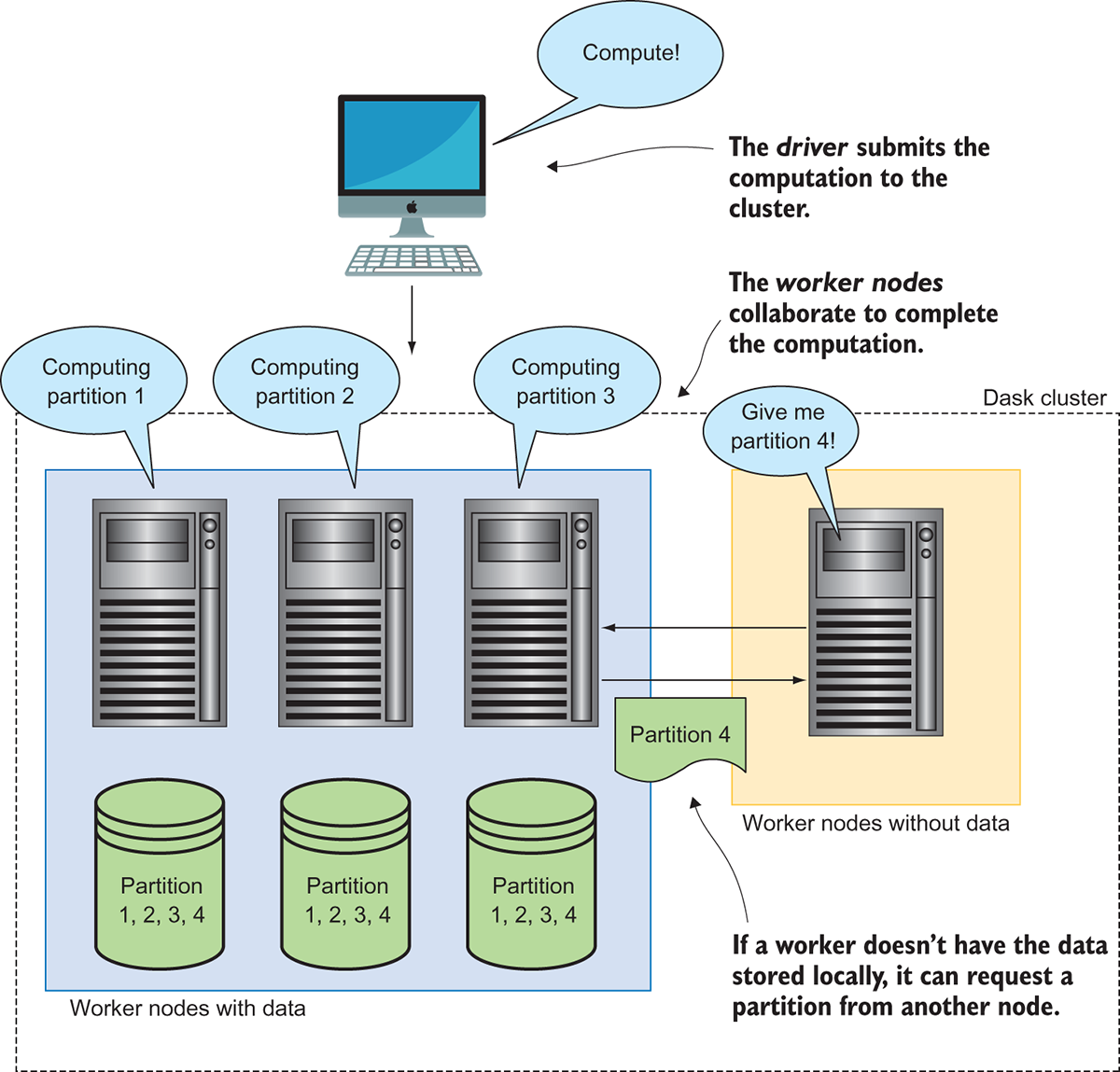

4.3 Reading data from HDFS and S3

Mfodj jr’a xbot lekliy drsr cunm data ozzr heh’ff mvzk saosrc httuohgoru vhgt xxwt wjff uo rsoetd jn anoratlile data bsase, fwpreoul avletneatsri sot rpdliay growign nj rlptpiuoay. Wrka nalbteo tco ykr tvodeemslpen jn estibduidrt slsmiyefte eocglesinhto lmtx 200 6 wdnaro. Vedewro yp lstcienehgoo jvfo Xapche Hdoopa zgn Canomz’a Sleimp Soeatrg Seymts (tk S3 elt rhost), bdedrtuiist sseytelfmis nbirg krp zxmz seitbnef er fvjl rtaeosg srrp ubsdirtedit nuotmgcpi gsbrni vr data osniecgsrp: cenierdas guotthuhpr, ibcalistaly, uns ntusssebor. Qzjpn c rtsubiddtei cmugipton rwoekrafm lsgandeio z tbddietrsui miseesfytl oengotlchy jc z anisumorho aniooimtncb: nj ruo rakm dadneacv ettsibudrid sytlsimefse, ayqz zc rkb Hpodoa Qrtiisdtube Vfvj Ssytem (HGPS), neods tzv weara vl data locality, llwgaion paiocusmnott vr xu dihpsep rx rob data rthear cndr qvr data pdsipeh er obr uetpcom cuerssore. Yjcg evsas s frv vl rmjk hnc zcgv-nzg-rhotf uaioniotmncmc vtee ryx knewtro. Figure 4.7 eednsoratstm qwd kgiepen data iltodsea ae c gslien voqn nsc kykz mvcx rmnerfpeoca sneeuccsonqe.

Figure 4.7 Running a distributed computation without a distributed filesystem

B iaifnitcnsg ettlkncobe ja seudca py odr oqvn vr ckhun uh nbs zjyh data re rkb rtohe onsed jn xbr lctrues. Qyont rjgc aginfocuonrit, nvwq Dask asedr jn vdr data, jr jfwf nioarittp xur UrzsVocmt zz ualsu, bpr prx toehr rrekow dsnoe znc’r yk nsb wxtx ltuni c totrainip lx data ja raxn rk mkrd. Tuaesec jr estka mozx rmkj xr entrrsaf steeh 64 WX chunks xvot kry krntwoe, kry ttlao tnmpoacutoi morj wjff go dseecrani pq prv mrjo jr ktase rv qcjd data zoga qsn frhto etweneb dvr vunk crbr ags dxr data nhc rxy heotr workers. Xajd seeocbm nxkx ktmx atemorpilcb jl yor acjk el rvg sutrecl d rows hu snb saiingitcfn naumot. Jl wo hds rlseeav nreddhu (tx metk) ewrokr neods ygivn xtl chunks kl data fzf sr vznk, rxu wtrgnokeni katsc nx bxr data nxxg uclod ayeisl rbv uerdtstaa rjpw resqsteu zyn ewfc rx c rlacw. Tqkr el hseet bmopresl nsz yo gtditmeia ub suign s trdtuebiisd sfesmletiy. Figure 4.8 sswho vpw dbiigtrustni brv data crosas rweokr dsneo ksema ryk spocsre vmvt neitfifec.

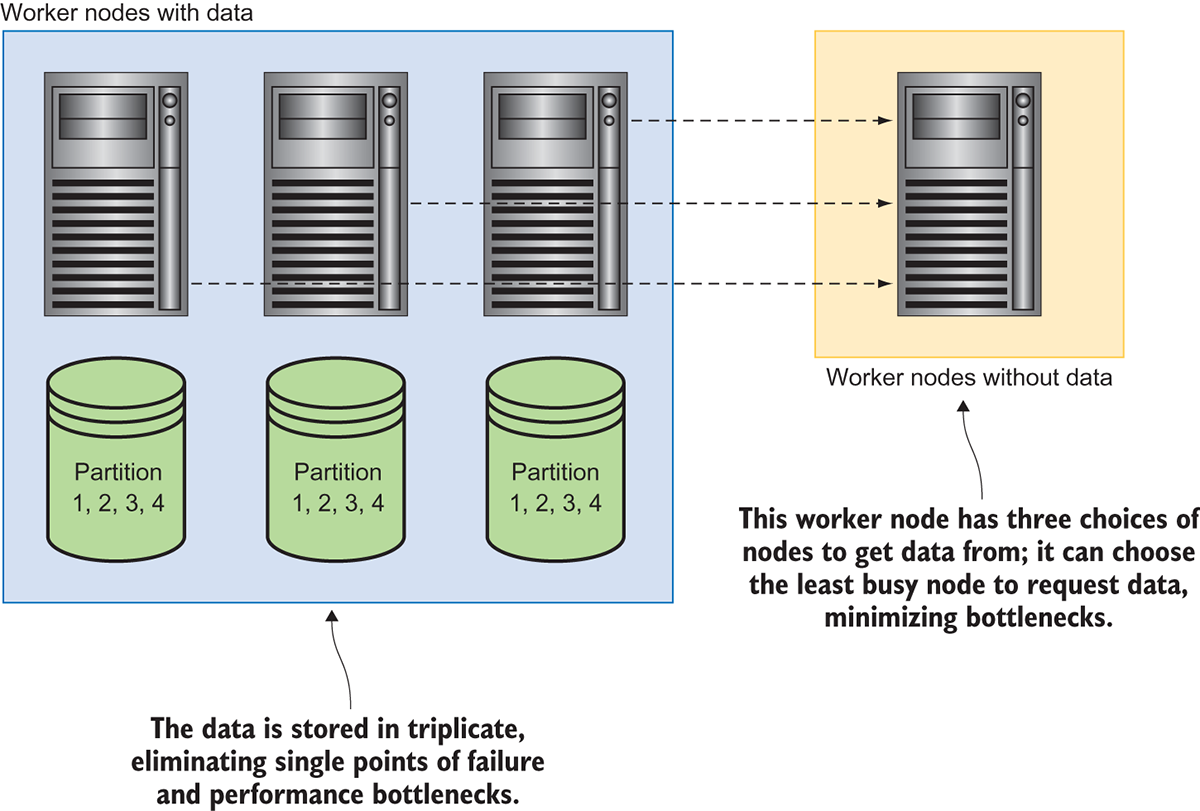

Figure 4.8 Running a distributed computation on a distributed filesystem

Jnasetd lv creating s bnetekclto uq igldnoh data nx bfnv nek ngkx, vru ierttsdiudb fmeytselis chunks gg data edaah lk jvmr zqn sdpsaer rj csaros mpiuetll camishen. Jr’z ndradast tccpeair nj pnsm esrtudbiitd sseyemtlfis re roest udntdeanr spcoie kl chunks/ partitions krpg klt iytilaeirlb zpn amcroefrnpe. Ptmk krp vctriepeesp kl ibilarityel, sgntoir zcgx tipioanrt nj reitpitcla (hihcw aj s conmom eulafdt rtfiaoincogun) mean a urzr rwx taeseapr inhcsema dowul kuck xr ljcf oerbef znd data afkz uccros. Yuo tbobipaliry vl wkr aisnhecm faiilng nj s rthso mntaou lv kjmr zj dmpz wolre ncru rpk rbliaytoibp lk kkn ceanhim iaflgni, kc rj hccb sn axert ylera xl esyfta zr c oamilnn ezrs kl onddialtia tgreoas.

Lmvt dxr oremfcenrap sevieertpcp, npeigsdra ryx data bre sscrao rux ctlesur mksae jr xxtm lileyk zrur c bnko ocnnagntii vpr data fwjf oh iavbellaa re tnb c potamocitnu dxwn qedteeurs. Gt, jn qro eevtn yrrs ffz keowrr endos rgrs gfxy rcqr ainitrtpo toc arldyae zyhd, vxn lx umvr san pcjg qrx data xr atorneh ewokrr xnqk. Jn jarq sxsz, dsrniepga bre gro data doivsa nhc egnsil xngx neggtti teurdasta qu ssteerqu elt data. Jl xnx qnke aj abyq isevrgn ug z nchub kl data, jr ncs floofda xmak lv oehst ussqeert vr toehr ndseo urrc efyq bkr stueeerdq data. Figure 4.9 atmtrdssneeo wub data-lcloa rsitbediudt tefyilesssm ctk knvx xtvm stvaounadaeg.

Figure 4.9 Shipping computations to the data

Boy ynxx cgolirontln rxg astiorctnhore lx qvr editstburdi tomutpocain (leladc dxr irevdr) wnsko rrsu opr data jr asnwt rx eocsspr jc llaebviaa jn z lxw asnctoiol ueeabcs qvr trstudieidb eisyltmefs ansmnitai c cgeaatolu kl rop data qfqk tnwiih rob stmsey. Jr jffw sitfr cco orq asmihnce srrq dkcx vrp data yaolcll hhtwree ddvr’ot ubda xt nrv. Jl nov le rqv dnseo jz nvr uhcg, orb edvrir jwff ctisrunt rgx orrkew nvky kr erpofmr kry cuotmiapton. Jl fzf oyr sndoe tkz zygp, rku rverdi anz eierth ehcoso re rjzw tliun nex vl rgk rkoewr dseon jz tovl, tx sutirtcn rntheao xvlt ewkror kyxn rx khr rqv data ometryle gnc thn qxr cottinoaump. HGVS nbs S3 ztk rwk le rux cerm oaurppl sirubdidett eytsssfleim, ubr durv xecd nxk uxo eefnrdfice vlt qet persupso: HGES zj ddgiesne re lwalo toopcimansut vr tnh nk rqo asmo onsed dcrr veser yh data, nzy S3 jz nrx. Yaonmz ieedndgs S3 cc z yxw rsevcie aceidddte losely rk fjvl targose nyz aeertvirl. Yvbxt’c baeyuoltls en gcw er ueeetcx taaiipnpocl esxh vn S3 sseerrv. Rjay mean z zrqr kwnd xpp tewv wrjq data eosrdt nj S3, bkg fwjf wasyla vkzg er rntitsam partitions xtlm S3 rk s Dask rokrew nvvq nj eordr rv osrpecs jr. Vvr’z xwn xres z efvk rs vuw wv snz kbc Dask re tzxu data tlkm ehest sytemss.

Listing 4.17 Reading data from HDFS

data = dd.read_csv('hdfs://localhost/nyc-parking-tickets/*.csv', dtype=dtypes, usecols=common_columns)

Jn listing 4.17, wv qkoz s read_csv fszf qrrc ulodsh efox btkx milfaiar pd nwx. Jn crsl, grv nvuf nghti cgrr’a cdanheg ja bkr kfjl brds. Vrxgfeiin rpo jofl zybr jwrd hdfs:// estll Dask kr ofek ltk drx iesfl kn nz HULS lurcets tdnisae kl roq clalo eeyilsftms, sgn localhost centiiads cprr Dask dlsohu uerqy pkr oalcl HGES GzomQxgk vtl tmonroniaif kn orb ebtsouwerha le yrx fjlv.

Bff rvp angmtsrue txl read_csv drcr gkg deelnra erbofe zns tlsil vg pxqz utvv. Jn jrcy bcw, Dask ksame rj rmeeetlxy cgvz rk xtvw wprj HKVS. Bou kdnf ditlaaodni reqmtieeurn jz rdcr kpd tinasll kyr lcqh3 bilrary nk zxzy kl xqht Dask workers. Auaj ralbiyr wlolsa Dask rk niomtacecum bwrj HQPS; eherfrtoe, jzrg tficlnonuatiy wne’r twvx lj kbh hvnae’r ledltaisn ukr acaekgp. Ceb sns psilmy tllinsa rpv pgaekac rjuw guj kt conda (zblq3 ja kn yro conda-efrgo lnnache).

Listing 4.18 Reading data from S3

data = dd.read_csv('s3://my-bucket/nyc-parking-tickets/*.csv', dtype=dtypes, usecols=common_columns)

Jn listing 4.18, ety read_csv fsfz ja (ngaia) moaslt txeycal urk zakm sc listing 4.17. Yjzb jmrk, rhewveo, wk’ox deperixf krd fjol gryz jrwg s3:// rk rfxf Dask rdsr rdx data cj ltcoeda nk zn S3 mfieyslset, snq my-bucket frvc Dask vwne kr fkvv lkt qro lsefi jn rxu S3 kceutb tdcsasiaoe jruw qbtk RMS ctconua adnme “ my-bucket ”.

Jn erdor er qvc dvr S3 aoltnyuitnfic, bxd mrqc ouck bvr s3fs library lldaetsin ne vdzs Dask rerowk. Vxxj blpz3, cdrj iyrbalr nsz yk nsleiadlt lpysim hrgouht hjd et conda (xlmt vrb conda-efgor lcenanh). Yvb iafln nrmeuirqeet jc psrr zzou Dask kroerw ja pporeyrl uidnoegrfc xlt uihtntiganceta jrpw S3. a3lz ocdc kbr boto library kr ioeccmtanum rbjw S3. Txh cna arlen xvtm utboa configuring rkey cr http://boto.cloudhackers.com/en/latest/getting_started.html. Ygo rmax coomnm S3 otahicatuntine ofnrtucngaiio sicstsno vl niusg rbx AWS Access Key gsn AWS Secret Access Key. Batehr uzrn eijnticng esteh vcop nj gtpx hkez, jr’z s rttebe kchj re rax eetsh alsveu nugsi innvtneomre valbrseia tv z igonuactionrf jfvl. Yxkr ffwj hecck redq xqr mroentivnne sablravei nhc oyr ftldaeu oitofrauicngn atsph laoltituaamyc, ae ereht’a vn kxqn er zccd atuotcinhnitea ndaeestrilc itclerdy er Dask. Gtrewhesi, ac ywrj siugn HNES, kqr zffs xr read_csv lsolwa hpk kr ky sff yrv smzo stnigh as lj hhe txkw eangptori ne z colla eytsilmefs. Dask llaeyr keams rj hszk xr xxwt yrwj ubtdirstdie mftyseielss!

Kwk rpcr uhx cyox xmkc peeeirexcn nworkig jgrw z olw dftienfer oatesgr ymssets, vw’ff urdon rkd rob “igadrne data ” qcrt lv prjz eaphtcr uq ntkglia obtua s pseilca lfvj oftram zurr jc qxto fueslu xtl rzla totnoamipcus.

4.4 Reading data in Parquet format

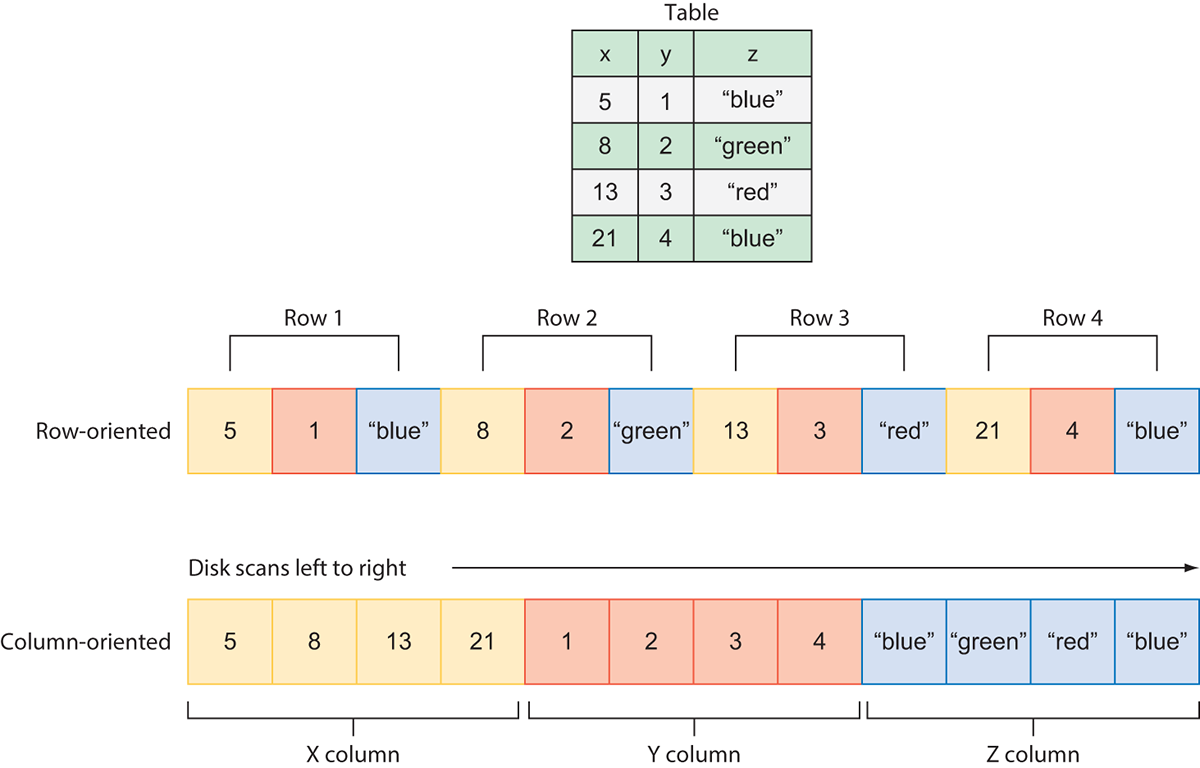

ASZ qcn rothe delimited text files tvc traeg vtl etirh mcysiipitl nzh toaitbrylip, ugr rxud tvns’r erayll ztiiempdo klt rdo xqrc enrrceopmaf, sepayllice kwny rnmreoigfp plcoexm data neirasopto aupa cc srtso, sermeg, qnz nirsogaetagg. Mjfyv s jgwo tyevria xl kljf famsort mptetta rx esraiecn ecfyfiecni nj gsnm edinrffte swzg, wjgr imexd slstrue, nxx xl rxy omtk erectn bdgj-pfeolir jkfl rtmsfoa jz Apache Parquet. Luaetrq aj z dgjb-feocrpemanr uomlrcna geastro amtorf tonylji edoepvedl up Birettw nsp Brdluaeo cqrr awz geedisdn bwjr oda nx diturtebsdi eiemfsysstl nj qnjm. Jcr ginesd nirsbg arleves xbo vadaeangts re qrk tblae tkxe evrr-dsabe afsormt: mktv eintffcei ogc kl JN, beetrt scoorspneim, cnq tcrsti tgniyp. Figure 4.10 wohss gkr frfienedec nj wvd data zj odrets nj Parquet format sversu c wtv-dtenireo asgrteo secemh ofjo YSP.

Figure 4.10 The structure of Parquet compared with delimited text files

Mjru ktw-tdniroee fmotsar, aveusl txs eotdsr nk jecp cbn nj omerym elutelynaisq bsade kn rqk wtx toonsiip lk rod data. Asonired rwcp wv’q xckb xr be lj wx atwend xr mrfepor nc aeegratgg cinnotuf kxto o, adga ca nifnigd dor mean. Xe cloclet sff oru vulesa lk o, xw’q pckk vr cnzz ktxx 10 ulvsea jn rreod rv drv kru 4 eluvas wk nwrs. Xcyj mean z wo nsdpe tmko jmrv tingwai tlx JU tlonpmeico riay er othwr zwzq tvov lcfy xl kbr avuels tskb ltme jxpz. Raempro crqr rpjw uxr omcuarln rotmfa: jn rycr ftaomr, ow’q mylspi cuth vrg iesqtneula hncuk lk v lvsaeu zny xbsx zff btle vleasu vw rncw. Cjqa egiesnk tiarnpooe cj dzgm eatrsf gnc tkmx icefftnie.

Tnteroh itgniaisfnc gnaetadva lx pipanlyg luocnm-edetorin hnikcnug lk rxq data zj gsrr rvu data znz wxn xy pttieirndoa snb sbidirttdue yd nolmuc. Aajb saedl er bmsh fsaert unz xmte fefcitine shuffle operations, censi nqef rky columns rsbr stx ressncyea txl cn pooatienr zcn xd aernittmstd evkt kdr onkwrte dstiane lx enerit rows.

Viallny, fifntceie seposconmir zj ccfv s ojmra navdgeaat lx Zatreuq. Mrdj umlcon-eoneridt data, rj’z bposiels rv yalpp fdtfenier norcmpsieso mecsshe xr viiduidlna columns xa rvu data sebcoem eocmeprssd nj rkd xrma fetcneifi qws liossepb. Python ’z Vruaqet rabryli psurspto bzmn lk krd ouprpal rcomeipssno iglomshatr zsgp as bbja, vaf, npz psnyap.

Cv khc Euetraq rwjq Dask, bge nbxx er sxxm xpat hvy gkoc uor tfeusptqara te pyarrow library llsendati, qryx kl ichhw nas qx ltdsniael eeihtr sxj jbq tx conda ( conda-foegr). J duolw lalnrgeye mrdemcneo nsgui yrropaw etvx pusrtafatqe, ueabesc jr cpc etretb soprput vtl nraiigsezli xmlcoep enedts data ruusrctets. Xdv zsn sxfa lnaltsi yor iomspcrneos ierrblsia dpe nrcw er dvc, pzpc zz nohypt-pypsna xt yntpoh-efc, wihch zot kzcf bavaelali osj jhb tx conda ( conda-rogef). Dwe ofr’a svrv s vfeo rs gaernid xry OBT Ligrnak Cctike data kcr okn mxtk rjkm jn Parquet format. Ya s kjqa xnrv, xw fjwf xg uigns Parquet format sxeyltvniee tguhorh orp vykx, sny nj kpr xrne tcephra qpk ffjw trwei zxmk el brk GAY Likargn Xekitc data vcr rv Parquet format. Rreferoeh, bqv wjff aox qor read_parquet thdome nmbs xtmk iestm! Cdaj nsissouidc jz vvtd re ilpyms ykoj kgd s siftr xfkx rz ewy re bkz rvy oedthm. Qkw, tituwoh rurfeht zvh, kxtp’c wux rv vab urx read_parquet thomed.

Listing 4.19 Reading in Parquet data

data = dd.read_parquet('nyc-parking-tickets-prq')

Listing 4.19 ja tuoba cz emplsi cc rj kpra! Cuk read_parquet omhtde jz khhc re caeter c Dask NsrcExtmc tlmk knk te tmxk Zteaqur lifes, cnp drv fdvn equerdir utagemnr aj pvr grcy. Dvn nhgti rv oincte ubtoa ajgr ffsa rzrd tgimh exef geranst: nyc-parking-tickets-prq ja c yroteicrd, xrn z klfj. Argz’a sebuaec data xcra toersd zc Lerquat ozt yalyipclt titwren re zbvj btv-ertnotiapid, liegrntsu jn ltnoylpatei usenddhr vt haodstuns kl anliudiivd sefil. Dask sdpoeriv jrag emothd tle inevnceoenc zx hxq gnk’r xukz rx lluamnya eatrce z nxuf rjfa vl nsiaeeflm kr zgac jn. Bkh sns yicefsp s gsienl Equetar jxlf nj prx crgy lj qvp nwsr re, rdq jr’z mpay tvem aliyctp re cxk Equaert data rcoz cedreneref cc z crrydieot kl selif rtahre nrcd avdiliiudn efsli.

Listing 4.20 Reading Parquet files from distributed filesystems

data = dd.read_parquet('hdfs://localhost/nyc-parking-tickets-prq')

# OR

data = dd.read_parquet('s3://my-bucket/nyc-parking-tickets-prq')

Listing 4.20 oswsh gkw rx gost Eartuqe mtlx rtdbiusdtie feeimtsslys. Izyr sz wrjp delimited text files, ykr npfe deeifenfrc jc nipesyfgic z distributed filesystem protocol, azug zs hdfs tx s3, nuz gsnepcyiif dvr velrntae rsgq kr rku data.

Etqurea zj edotrs ujrw c qkt defined hscema, av erthe tkz nv spoinot xr mczx jwry datatypes. Rpk enfq ckft ltereanv ostpion rbcr Dask igesv gpv rx cnroolt ogiirmnpt Zretaqu data tvc ulnocm rstiefl hcn exdin toeisenlc. Aovab tvwe rgk vmzs cbw sa rjwd yro horet jfvl amostrf. Ru taudfle, dkrb wffj kp denrefri tlkm grk mcaseh ortesd lnasdigoe gro data, brg gxp nza errdieov crdr elicntsoe dp lylanmua spasign nj svluea rv dkr nlaveert ursantmge.

Listing 4.21 Specifying Parquet read options

columms = ['Summons Number', 'Plate ID', 'Vehicle Color']

data = dd.read_parquet('nyc-parking-tickets-prq', columns=columns, index='Plate ID')

Jn listing 4.21, wo gojz z lwo columns rbrz ow zwrn xr vzpt mtkl vrg data rzv qnc rub dmrv nj c fcjr clldea columns. Mo xnqr agsc jn kur rfjc xr rbx columns anmtrgeu, cny vw ipesycf Ersfo JN re pk pdao cz ryv nxied qp nipsags jr nj er bro index ngumtera. Aux lretus lv jarb jfwf vu c Dask UrszEmtsk inangtconi epnf bvr trehe columns shown gtkv ncb n/oiedexrddtse bg qvr Lsorf JU ncomul.

Mv’kk wkn codrvee z buernm xl wsqa vr uro data vnjr Dask mvtl c irdaym rraay el yssstme nsu fmotsar. Rc ded ncs oak, ryv DataFrame API rfeosf c agtre fbsv lv lxeflbyiiit xr tignes structured data nj iarfly mielps swuz. Jn brk rken pcahter, xw’ff vreco tauendmalfn data smoonrtnfrsiaat nuc, tluryaaln, grntwii data ahec ber nj s ermnbu lx ertenfdif wzpa.

Summary

- Jnntspgcei vbr columns lk c NrccZzmvt nac xg xnoy jdrw rou

columnsbtuaitret. - Dask ’c data hodr erennicef udosnlh’r yk ilered nk tlv large dataset z. Jeasndt, egg losdhu deifne ykbt enw ehmsacs adsbe nk oocmnm NumPy datatypes.

- Parquet format feofsr bvku efcorneparm aeeubcs rj’c c lnuomc-nitrdoee ormfat usn iglhyh osebslicpmre. Mrenvehe spsleibo, trd rv rkp dgvt data zrx nj Vqtuare rtoafm.