chapter ten

10 Combining building blocks to gain more power: Neural networks

In this chapter

- what is a neural network?

- the architecture of a neural network: nodes, layers, depth, and activation functions

- training neural networks using backpropagation

- potential problems in training neural networks, such as the vanishing gradient problem and overfitting

- techniques to improve neural network training, such as regularization and dropout

- using Keras to train neural networks for sentiment analysis and image classification

- using neural networks as regression models

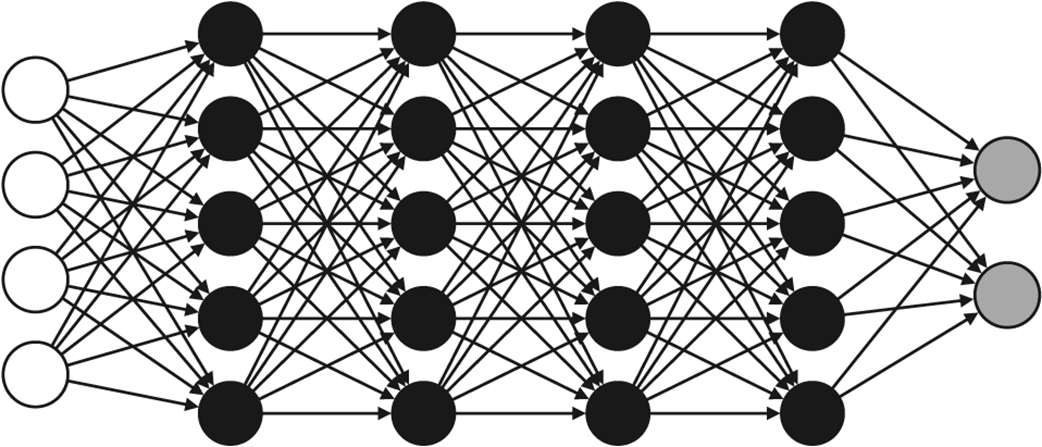

In this chapter, we learn about neural networks, also called multilayer perceptrons. Neural networks are among the most popular machine learning models, if not the most popular. They are so useful that the field built around them has its own name: deep learning. Deep learning has numerous applications in cutting-edge areas of machine learning, including image recognition, natural language processing, medicine, and self-driving cars. Neural networks are meant, in a broad sense, to mimic how the human brain operates. They can be very complex, as figure 10.1 shows.

Figure 10.1 A neural network. It may look complicated, but in the next few pages, we will demystify this image.