2 A Tiny History of RLHF

This chapter covers

- The three eras of RLHF’s recent history

- Seminal models and papers that shaped RLHF

RLHF and its related methods are very new. We highlight this history to show how recently these techniques were formalized, and how much of the documentation lives in the academic literature rather than textbooks. Some details covered here will change, but the core practices are stable. The papers listed here also show why the RLHF pipeline looks the way it does: some of the seminal work targeted applications entirely distinct from modern language models.

In this chapter we detail the key papers and projects that brought the RLHF field to where it is today. This is not intended to be a comprehensive review of RLHF and related fields, but rather a starting point and a retelling of how the field developed. It is intentionally focused on recent work that led to ChatGPT. There is substantial further work in the RL literature on learning from preferences [1]. For a more exhaustive list, consult a dedicated survey paper [2], [3].

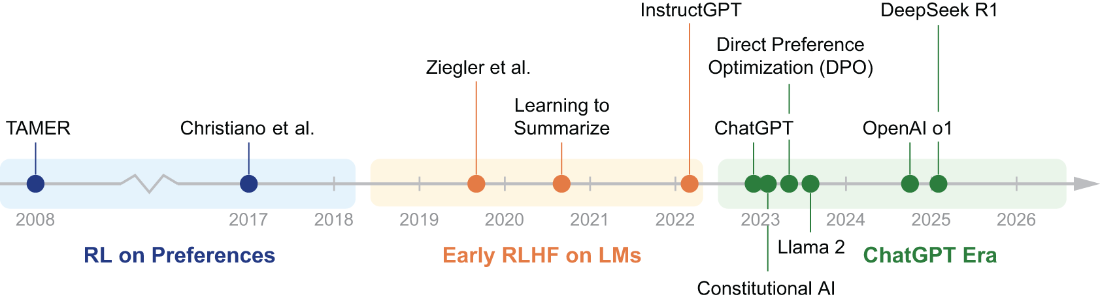

Figure 2.1 Timeline of key developments in RLHF discussed in this chapter, from early work on RL from preferences through the adoption of RLHF in large language models.